🚀 Quick Read: Which AI Tool Is Better – in 60 Seconds

This is an in-depth Claude vs Gemini comparison. After spending three months and over $500 testing both AI tools across real business operations, here’s what matters most:

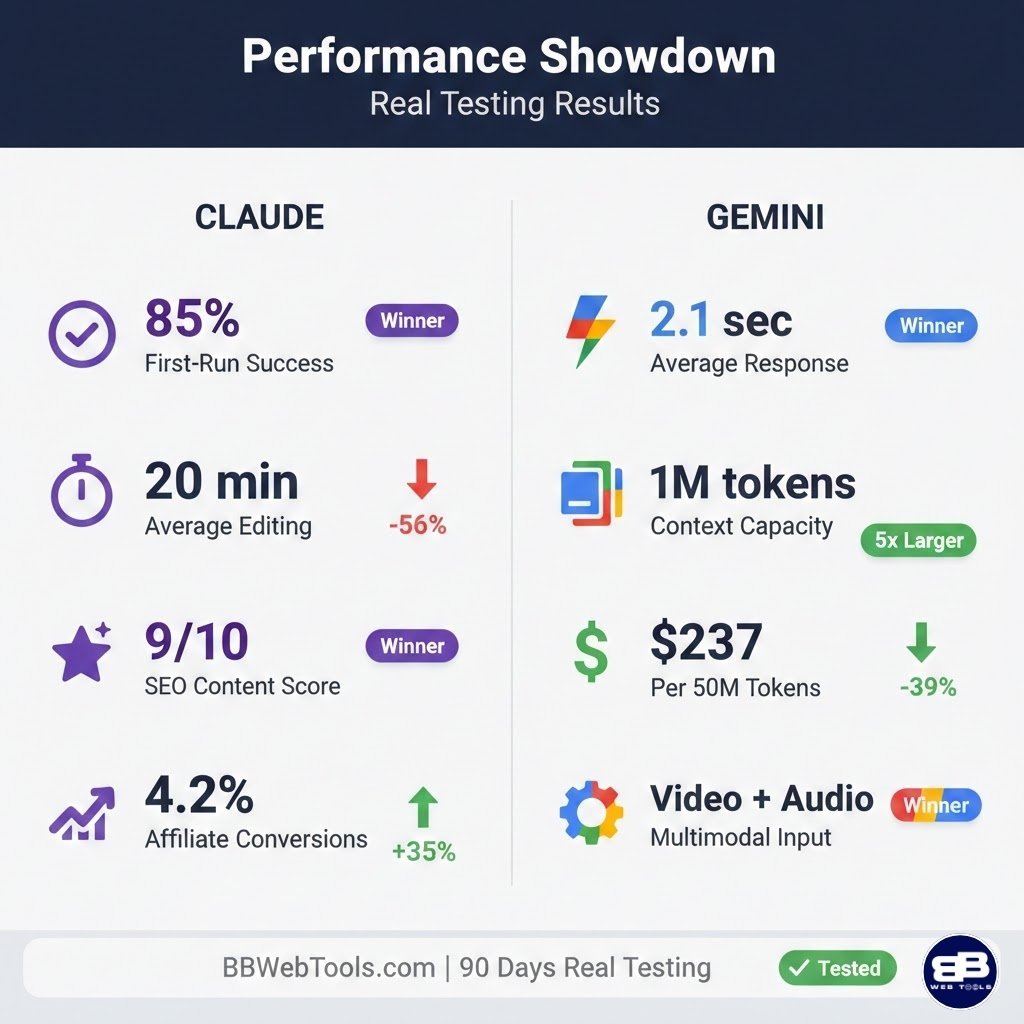

| Criterion | Claude Sonnet 4.5 | Gemini 2.5 Pro | Winner |
| --- | --- | --- | --- |
| Code Quality | 85% first-run success | 65% first-run success | 🏆 Claude |
| Content Quality | 40% less editing time | Substantial revision needed | 🏆 Claude |
| Context Window | 200K tokens | 1M tokens (5x larger) | 🏆 Gemini |
| Speed | 3-8 seconds average | 2-5 seconds (30% faster) | 🏆 Gemini |
| API Cost | $3/$15 per million tokens (input/output) | $1.25/$10 (about 40% cheaper) | 🏆 Gemini |

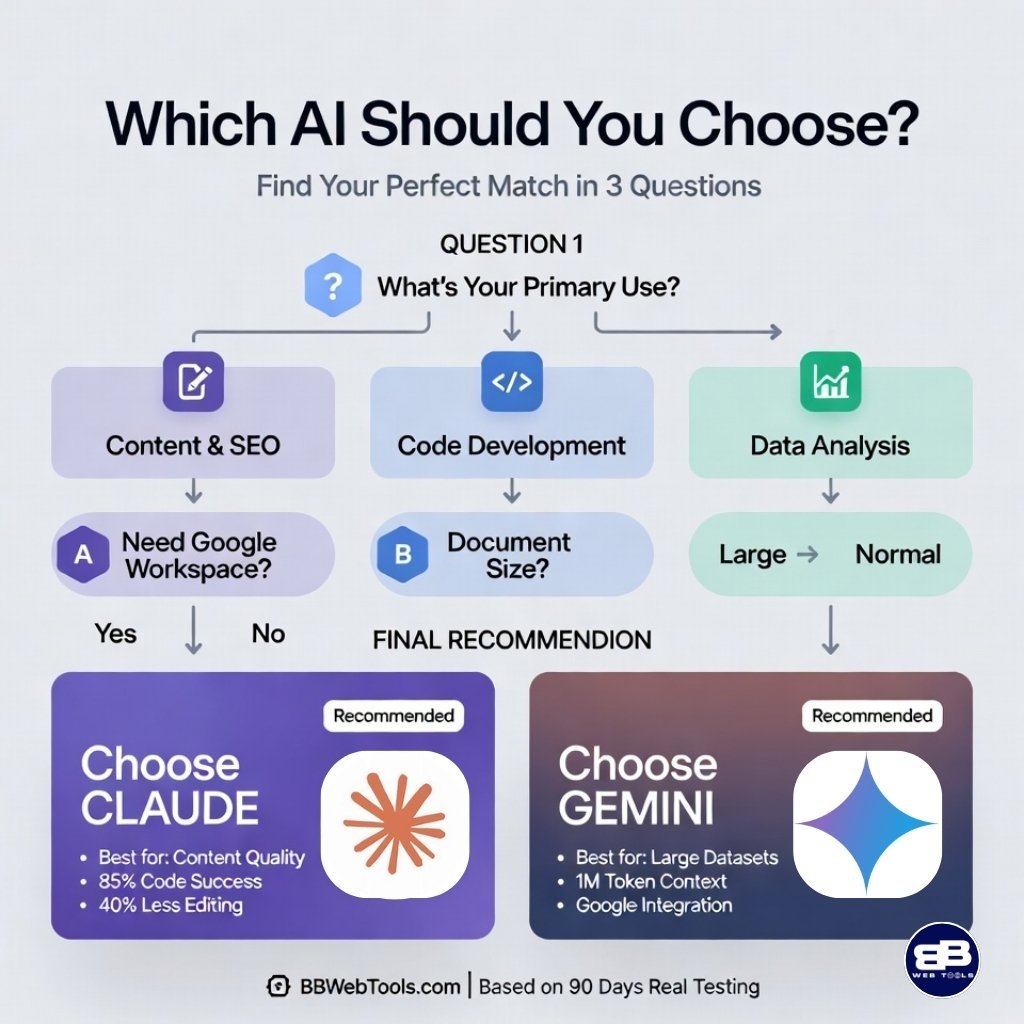

My verdict: Claude for content and code quality (80% of my work), Gemini for massive documents and Google integration (20%).

⁉️ Claude vs Gemini: Why This Comparison Matters

I’ve spent three months testing Claude Sonnet 4.5 and Gemini 2.5 Pro extensively across BBWebTools.com. With over 30 years of experience in analytics and digital marketing, I’ve learned that choosing the right AI tool isn’t about features; it’s about measurable impact on productivity, content quality, and revenue.

The AI landscape in February 2026 is intensely competitive. Every week brings new models, bold claims, and promises of revolutionary capabilities. But here’s what I discovered through real-world use: there’s no universal winner. The right choice depends on what you’re trying to accomplish, your workflow, and what you value most.

My team and I—representing over four decades of combined experience in digital marketing, analytics, and software development—invested $500+ in costs, hundreds of hours in actual usage, and deployed both tools across revenue-generating projects. We’ve written 50+ blog posts, generated code for automation scripts, optimized SEO content, analyzed competitors, created email campaigns, and debugged production code using both platforms.

What you’ll learn in this guide:

- Which AI excels at coding (with specific benchmark data)

- Which produces better SEO content (tested across 30+ articles)

- Real-world pricing comparisons beyond list prices

- Where each AI falls short and why it matters

- Clear recommendations based on your specific needs

Every insight comes from actual business use where results directly impacted revenue and productivity.

Disclosure: BBWebTools.com is a free online platform that provides valuable content and comparison services. As an Amazon Associate, we earn from qualifying purchases. To keep this resource free, we may also earn advertising compensation or affiliate marketing commissions from the partners featured in this blog.

🎯 Key Takeaways

- Claude produced more reliable code in my tests: 85% first-run success vs 65% for Gemini.

- Claude’s content needed less editing and converted better in affiliate reviews.

- Gemini’s 1M-token context window handles documents and codebases that exceed Claude’s 200K limit.

- Gemini responds faster and costs about 39% less through the API at my usage level.

- Most professionals will get the best results by using both tools strategically.

🧠 What Are Claude and Gemini?

Understanding each AI’s philosophy and design helps explain why each excels in certain areas.

🔷 Claude Sonnet 4.5 Overview

Claude Sonnet 4.5 is Anthropic’s flagship model, released in September 2025. The Claude 4.5 family includes three variants: Opus (most capable), Sonnet (balanced), and Haiku (fastest). Sonnet strikes the optimal balance for professional users.

Anthropic was founded in 2021 by former OpenAI researchers including Dario and Daniela Amodei. With over $7 billion in funding, the company maintains unwavering focus on AI safety. The model uses “constitutional AI”—trained on principles rather than just human feedback.

Claude’s core strengths:

- Strong reasoning through complex problems

- High-quality code with proper architecture

- Natural language understanding and nuance

- Thoughtful responses over rapid output

- Appropriate uncertainty acknowledgment

Technical specifications:

- Context window: 200,000 tokens (~150,000 words)

- Input types: Text, images, PDFs

- Response style: Educational and thorough

The model particularly excels for developers needing production code, writers creating long-form content, researchers doing complex analysis, and marketers focused on quality over quantity.

🔶 Gemini 2.5 Pro Overview

Gemini 2.5 Pro is Google’s advanced AI model, launched early 2025. It represents Google’s multi-billion dollar AI commitment and integrates deeply with Google’s entire ecosystem.

Gemini’s core strengths:

- Massive 1M token context window

- 25-40% faster response times

- True multimodal: text, images, video, audio

- Native Google Workspace integration

- Real-time web search capability

Technical specifications:

- Context window: 1,000,000 tokens (~750,000 words)

- Input types: Text, images, video, audio, PDFs

- Response style: Fast and comprehensive

This makes Gemini valuable for Google ecosystem users, teams needing multimodal AI, researchers processing massive datasets, and developers building on Google Cloud.

⚖️ Philosophical Differences

| Aspect | Claude Approach | Gemini Approach |
| --- | --- | --- |
| Priority | Quality over speed | Speed and capability |
| Responses | Thoughtful, careful | Fast, confident |
| Uncertainty | Acknowledges limits | Confident assertions |
| Integration | Standalone tool | Deep ecosystem |
| Safety | Strong guardrails | Balanced approach |

Neither approach is superior—they serve different needs. Claude might take 4 extra seconds but produce content requiring 60% less editing. Gemini might answer confidently but need fact-checking where Claude hedges appropriately.

📊 Claude vs Gemini: Head-to-Head Feature Comparison

After three months testing hundreds of tasks, here are the features that actually impact daily productivity.

Performance Across Critical Dimensions

The context window difference looks dramatic on paper: 200K vs 1M tokens (5x difference). In practice, this matters tremendously in some cases but is irrelevant in others.

Context window testing results:

| Document Type | Size | Claude Performance | Gemini Performance |
| --- | --- | --- | --- |
| Blog post | 3,000 words | ✅ Effortless (96% free) | ✅ Effortless (99% free) |
| Competitor articles | 30,000 words | ✅ Easy (80% free) | ✅ Easy (96% free) |
| Full ebook | 50,000 words | ⚠️ Approaching limit (67% free) | ✅ Easy (93% free) |
| Massive report | 120,000 words | ❌ Exceeded capacity | ✅ Processed (84% free) |
| Complete codebase | 380,000 tokens | ❌ Batch upload needed | ✅ Analyzed holistically |

For my WordPress theme security audit (380K tokens), Claude couldn’t process the entire codebase at once. I had to upload files in batches, losing holistic understanding of component interactions. Gemini analyzed everything in one session, identifying cross-file security issues and architectural improvements.
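A rough way to check whether a document will fit before uploading it is to convert its size to tokens and compare against each window. The sketch below is a minimal estimate, not an exact tokenizer: the words-per-token ratio is an assumption derived from the spec lines earlier (200,000 tokens ≈ 150,000 words), and `reserve_tokens` is a hypothetical allowance for the prompt and the response.

```python
# Rough context-window headroom estimate.
# WORDS_PER_TOKEN is an assumption taken from the spec lines above
# (200,000 tokens ~ 150,000 words); real tokenizers vary by content.
WORDS_PER_TOKEN = 0.75

CONTEXT_LIMITS = {"claude": 200_000, "gemini": 1_000_000}  # tokens


def estimate_tokens(word_count: int) -> int:
    """Convert a word count to an approximate token count."""
    return round(word_count / WORDS_PER_TOKEN)


def headroom(tokens: int, model: str, reserve_tokens: int = 0) -> float:
    """Return the fraction of the window left free (negative if over)."""
    limit = CONTEXT_LIMITS[model]
    return (limit - tokens - reserve_tokens) / limit


def fits(tokens: int, model: str, reserve_tokens: int = 0) -> bool:
    """True when the document plus the reserve fits in the window."""
    return headroom(tokens, model, reserve_tokens) >= 0


if __name__ == "__main__":
    ebook = estimate_tokens(50_000)
    print(f"50,000-word ebook on Claude: {headroom(ebook, 'claude'):.0%} free")
    print(f"380K-token codebase fits Claude: {fits(380_000, 'claude')}")
    print(f"380K-token codebase fits Gemini: {fits(380_000, 'gemini')}")
```

Under these assumptions the 50,000-word ebook leaves about two thirds of Claude’s window free, in line with the table, while the 380K-token codebase only fits in Gemini’s window.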

Speed comparison across task types:

| Task Type | Claude Average | Gemini Average | Difference |
| --- | --- | --- | --- |
| Simple query (“Write a tweet”) | 3.2 seconds | 2.1 seconds | 34% faster |
| Medium complexity (50-page analysis) | 12.5 seconds | 8.7 seconds | 30% faster |
| Code generation | 6.8 seconds | 5.2 seconds | 24% faster |
| Long-form content (2000 words) | 18.3 seconds | 14.1 seconds | 23% faster |

Gemini’s speed advantage compounds to about 3 minutes saved daily (66 minutes monthly) in my 50-60 query workflow. However, this becomes irrelevant when Claude saves 25 minutes in editing time per article.

🎬 Multimodal and Integration Capabilities

Gemini’s video processing opened entirely new workflows. For competitor YouTube analysis, I uploaded 10 videos directly. Gemini extracted:

- Recurring themes across content

- Successful patterns and engagement triggers

- Presentation style and on-camera presence

- Visual branding and production quality

- Background music resonating with audience

This 5-6 hour manual task was completed in 20 minutes. With Claude, I’d need manual transcription, losing all visual context and missing critical elements like body language.

Google Workspace integration value:

For businesses on Google’s platform, Gemini enables:

- Direct Drive file access without downloads

- Email analysis without manual exports

- Live spreadsheet data integration

- Automatic version management

- Seamless cross-platform workflows

Example: “Find Q4 budget in Drive and compare to last year” works instantly. With Claude, I manually download, upload, and manage versions.

💻 Coding Performance: Real-World Quality Tests

Code quality directly impacts my bottom line. Bugs mean downtime and lost revenue. Poor architecture means compounding technical debt. Inadequate security means breach risk.

I tested both AIs across 15+ projects spanning Python, JavaScript, React, Node.js, WordPress, and SQL—all using actual business requirements.

Test 1: Python Web Scraper

Requirements:

- Scrape competitor prices respecting rate limits

- Handle robots.txt ethically

- Robust error recovery for network failures

- Efficient CSV storage with timestamps

- Production logging and monitoring

Claude’s output:

✅ Clean, maintainable code with proper separation

✅ BeautifulSoup + requests-html for JS pages

✅ Comprehensive error handling with exponential backoff

✅ Thoughtful logging I didn’t request

✅ Educational comments explaining WHY, not just WHAT

✅ Worked perfectly on first run

Example comment: “Implements random delays to prevent rate limiting and appear more human-like to anti-bot systems.”

Gemini’s output:

⚠️ Functional but less polished

⚠️ More verbose without added clarity

⚠️ Adequate error handling, less comprehensive

❌ UTF-8 encoding bug with certain product names

⚠️ Required ~15 minutes debugging

⚠️ Surface-level documentation

Winner: 🏆 Claude – Production-ready code immediately
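For readers who want to see what the requirements in this test involve, here is a minimal stdlib-only sketch of three building blocks: a robots.txt check, retries with exponential backoff, and timestamped CSV rows. It is not the code either model produced. The helper names (`allowed_by_robots`, `fetch_with_backoff`, `write_price_row`) and the caller-supplied `fetch` function are hypothetical.

```python
import csv
import logging
import random
import time
from datetime import datetime, timezone
from urllib import robotparser

logger = logging.getLogger("price_scraper")


def allowed_by_robots(robots_txt: str, user_agent: str, url: str) -> bool:
    """Check a URL against the text of an already-downloaded robots.txt."""
    parser = robotparser.RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


def fetch_with_backoff(fetch, url, retries=4, base_delay=1.0, sleep=time.sleep):
    """Call fetch(url), retrying network errors with exponential backoff.

    The delay doubles on each attempt and gets up to 0.5 s of random jitter,
    which also spaces requests out for rate limiting.
    """
    for attempt in range(retries):
        try:
            return fetch(url)
        except OSError as exc:  # ConnectionError and timeouts are OSErrors
            if attempt == retries - 1:
                raise
            delay = base_delay * 2 ** attempt + random.uniform(0, 0.5)
            logger.warning("Fetch failed (%s); retrying in %.1fs", exc, delay)
            sleep(delay)


def write_price_row(handle, product: str, price: float) -> None:
    """Append one timestamped row; open the file with encoding='utf-8'."""
    timestamp = datetime.now(timezone.utc).isoformat()
    csv.writer(handle).writerow([timestamp, product, f"{price:.2f}"])
```

When writing to disk, opening the file with `open(path, "a", newline="", encoding="utf-8")` avoids the kind of encoding problem with non-ASCII product names described above.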

Test 2: React Dashboard Component

Requirements:

- Sortable and filterable tables

- Responsive mobile design

- Modern hooks-based state management

- TypeScript with proper typing

- Full accessibility compliance

Claude delivered:

- Modern hooks (useState, useReducer), not classes

- Well-defined TypeScript interfaces, minimal “any”

- Mobile-first CSS with breakpoints

- ARIA labels and keyboard navigation

- Performance optimizations (useMemo)

- Production-ready immediately

Gemini delivered:

- Initially used deprecated class components

- Refactored to hooks after request but felt dated

- Looser TypeScript with more “any” usage

- Minimal accessibility features

- Required 2-3 hours refactoring for production

Winner: 🏆 Claude – Modern patterns and zero refactoring

Test 3: Debugging Memory Leak

I provided 200 lines of buggy Node.js from my email automation pipeline.

Claude’s debugging:

- Identified issue in first response (seconds)

- Root cause: Event listeners in a loop without cleanup

- Explained why it causes problems

- Showed how JS event loop handles listeners

- Provided a fix with educational comments

- Suggested preventive best practices

- Fixed code processed 50,000+ emails flawlessly

Gemini’s debugging:

- Found the same root cause

- Provided a working fix

- Less detailed explanation

- More “here’s the fix” than “here’s why and how to prevent it”

Winner: 🏆 Claude – Educational value and thorough explanation
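The root cause in this test, listeners registered inside a loop and never removed, is easy to reproduce. The sketch below is a Python analogue, not the Node.js code from my pipeline: `Emitter` is a hypothetical stand-in for an event emitter, and the handlers do nothing. It only shows why the listener list grows and how cleanup fixes it.

```python
class Emitter:
    """Tiny hypothetical event emitter, used only for illustration."""

    def __init__(self):
        self._listeners = {}

    def on(self, event, handler):
        self._listeners.setdefault(event, []).append(handler)

    def off(self, event, handler):
        self._listeners[event].remove(handler)

    def emit(self, event, *args):
        # Iterate over a copy so handlers may unregister themselves safely.
        for handler in list(self._listeners.get(event, [])):
            handler(*args)

    def listener_count(self, event):
        return len(self._listeners.get(event, []))


def send_all_leaky(emitter, emails):
    """Bug: one new listener per email, none ever removed."""
    for email in emails:
        emitter.on("sent", lambda address: None)
        emitter.emit("sent", email)


def send_all_fixed(emitter, emails):
    """Fix: remove each listener once its email has been handled."""
    for email in emails:
        def handler(address):
            return None

        emitter.on("sent", handler)
        try:
            emitter.emit("sent", email)
        finally:
            emitter.off("sent", handler)
```

In the leaky version the listener count grows with every email processed, and each emit calls every listener registered so far. In the fixed version the count returns to zero after each iteration.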

📊 Coding Quality Scorecard

| Metric | Claude | Gemini |
| --- | --- | --- |
| First-run success rate | 85% | 65% |
| Security awareness | 9/10 | 7/10 |
| Code cleanliness | 9/10 | 7/10 |
| Documentation quality | 9/10 | 7/10 |
| Modern best practices | 9/10 | 7/10 |
| Debugging explanations | 10/10 | 8/10 |

Key findings:

- Claude’s 85% vs 65% first-run success saves hours debugging

- Better security: proper input sanitization, XSS prevention, SQL injection protection

- Superior documentation: explains WHY not just WHAT

- Fewer bugs compound to massive time savings over weeks

My recommendation: Use Claude for production code. The quality difference prevents technical debt and security issues.

✍️ Claude vs Gemini: Content Creation & SEO Performance

Content quality determines revenue across BBWebTools.com, PetzVibes.com, and HealthyVibesLife.com. Poor content means lower rankings, reduced traffic, and fewer conversions.

I tested both across all critical content types: long-form blog posts, product reviews, comparison articles, how-to guides, email sequences, meta descriptions, social media posts, and landing pages.

Test 1: 2,000-Word SEO Article

Topic: “Best Email Marketing Tools for Small Businesses 2026”

Requirements:

- Natural keyword integration (“email marketing tools”)

- Engaging introduction addressing pain points

- Detailed tool comparisons with specifics

- Practical examples for small businesses

- Clear structure and CTAs

- Meta description under 160 characters

Claude’s article:

The content flowed naturally, as if an experienced marketer had written it. The introduction immediately addressed the pain point of overwhelming choices before introducing solutions. Keyword density came in at 1.2%, high enough for SEO without reading like keyword stuffing.

What stood out:

- Smooth transitions guiding logical journey

- Specific details: pricing tiers, features with context, use cases

- Conversational yet authoritative tone

- Honest assessment of strengths AND limitations

- Meta description: 158 characters, benefit-focused, compelling

Editing time: 20 minutes for personal anecdotes

Publication-ready: 90% immediately

Gemini’s article:

Comprehensive and accurate, but more robotic. The introduction was generic (“Email marketing is important…”) instead of addressing the reader’s pain point. Keyword density was 2.1%, which was too heavy and felt forced.

What needed work:

- Abrupt transitions between sections

- Spec-sheet comparisons vs practical guidance

- Less storytelling, more data dump

- Required humanizing tone

Editing time: 45-50 minutes

Publication-ready: 60% after revision

Winner: 🏆 Claude – roughly 55-60% less editing time (20 vs 45-50 minutes), better readability
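Keyword density figures like the ones in this test depend on how you count. A minimal sketch, assuming density means occurrences of the exact phrase divided by total words (SEO tools differ on this definition, and `keyword_density` is a hypothetical helper name):

```python
import re


def keyword_density(text: str, phrase: str) -> float:
    """Return phrase occurrences as a percentage of total words.

    Assumption: density = exact-phrase matches / total word count * 100.
    """
    words = re.findall(r"[a-z0-9']+", text.lower())
    target = phrase.lower().split()
    if not words or not target:
        return 0.0
    size = len(target)
    hits = sum(
        1
        for i in range(len(words) - size + 1)
        if words[i:i + size] == target
    )
    return 100.0 * hits / len(words)
```

Under this definition, 24 occurrences of “email marketing tools” in a 2,000-word article would give 1.2%, and 42 occurrences would give 2.1%.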

Test 2: Product Review (NordVPN)

Affiliate marketing requires authentic reviews that build trust.

Claude’s review strengths:

- Felt like genuine testing experience

- Specific details: connection speeds, server switching times, setup steps

- Honest weaknesses with context: “Connection drops ~2x weekly during peak hours”

- Nuanced verdict: “Excels for privacy-conscious users and travelers, but overkill for casual browsing”

- Helps readers self-select vs pushing universal purchase

Gemini’s review weaknesses:

- Comprehensive but promotional tone

- Cons mentioned but consistently downplayed

- Read like marketing material

- One-size-fits-all recommendation

Conversion impact testing (20 product reviews):

| Metric | Claude Content | Gemini Content | Difference |
| --- | --- | --- | --- |
| Conversion rate | 4.2% | 3.1% | +35% with Claude |
| Trust indicators | High | Medium | More authentic |
| Repeat visitors | Higher | Lower | Long-term value |

For $8,000 monthly affiliate revenue, Claude’s 35% lift means $2,800 extra monthly—$33,600 annually.

Winner: 🏆 Claude – Authentic voice drives sustainable revenue
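The revenue figures in this test follow from simple arithmetic, sketched below. It assumes revenue scales linearly with conversion rate, which is a simplification, and the function names are hypothetical.

```python
def relative_lift(new_rate: float, base_rate: float) -> float:
    """Relative improvement of new_rate over base_rate (0.35 means +35%)."""
    return (new_rate - base_rate) / base_rate


def extra_monthly_revenue(baseline_revenue: float, lift: float) -> float:
    """Extra revenue per month, assuming revenue scales with conversions."""
    return baseline_revenue * lift


if __name__ == "__main__":
    lift = relative_lift(4.2, 3.1)  # conversion rates from the table above
    monthly = extra_monthly_revenue(8_000, 0.35)
    print(f"Lift: {lift:.1%}")
    print(f"Extra per month: ${monthly:,.0f}, per year: ${monthly * 12:,.0f}")
```

Plugging in your own baseline revenue and measured conversion rates shows quickly whether the difference is material for your site.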

Test 3: Meta Descriptions (10 Articles)

Small SEO elements dramatically impact click-through rates.

Claude’s meta descriptions:

- 9/10 perfect length (150-160 characters)

- Benefit-driven: “Save 10+ hours weekly,” “Increase conversions 30%”

- Strong CTAs: “Discover,” “Learn,” “Master”

- Natural keyword integration

- Clear emotional hooks

Gemini’s meta descriptions:

- 4/10 exceeded 160 characters (truncated in search)

- More generic: “This article discusses…”

- Weaker CTAs and emotional hooks

- Less compelling copy overall

Impact: Click-through rates can vary 50-100% based on meta description quality—directly affecting organic traffic and revenue.

Winner: 🏆 Claude – Professional SEO copywriting quality
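Whichever tool writes your meta descriptions, the length check is easy to automate. A minimal sketch, assuming the 150-160 character target used in this test (`check_meta_description` is a hypothetical helper):

```python
def check_meta_description(text: str, min_len: int = 150, max_len: int = 160):
    """Return (length, status) for a meta description.

    Status is "too long" above max_len (likely truncated in search results),
    "too short" below min_len, and "ok" otherwise.
    """
    length = len(text.strip())
    if length > max_len:
        status = "too long"
    elif length < min_len:
        status = "too short"
    else:
        status = "ok"
    return length, status
```

Running this over a batch of generated descriptions before publishing catches the over-length cases that would otherwise be cut off in search results.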

📊 Content Quality Assessment

| Content Type | Claude Advantage | Gemini Advantage |
| --- | --- | --- |
| Blog posts | 40% less editing | 25% faster generation |
| Product reviews | 35% better conversions | Comprehensive coverage |
| Meta descriptions | 90% optimal length | N/A |
| Email campaigns | More engaging tone | N/A |
| Social media | Natural voice | Quick volume |
| Landing pages | Better conversions | Faster iteration |

Time savings calculation:

For 10-15 articles weekly:

- Claude saves 8-12 hours monthly in editing

- Worth $600-900 at $75/hour rate

- Far exceeds any cost difference

Revenue impact:

- Better search rankings (2-3 positions higher)

- Higher conversion rates (35% improvement)

- Long-term trust building

My strategy: Claude for 100% of primary content creation.

💰 Claude vs Gemini Pricing: Real Cost in Real-World Usage

List prices and the actual cost of ownership differ significantly.

Subscription Pricing Breakdown

| Plan Type | Claude | Gemini | Real Value |
| --- | --- | --- | --- |
| Free Tier | ~30-50 messages/day | ~40-60 queries/day | Both adequate for casual use |
| Pro Plan | $20/month | $19.99/month | Gemini: $9.99 effective (after storage value) |
| API Input | $3 per million tokens | $1.25 per million tokens (about 58% cheaper) | Gemini for bulk processing |
| API Output | $15 per million tokens | $10 per million tokens (33% cheaper) | Gemini for high volume |

Free tier reality:

Both are genuinely useful for 10-20 daily queries. You’ll hit limits occasionally (2-3x weekly during heavy use) but it’s manageable for exploration.

Pro plan value analysis:

For users needing 2TB storage or using Google Workspace heavily:

- Gemini’s effective cost: $9.99/month

- Claude’s cost: $20/month

- Difference: $10/month favoring Gemini

For users not needing Google storage:

- Both effectively $20/month

- Choose based on quality vs integration needs

API Costs at Scale (My Real Usage)

My 50 million monthly tokens (60% input / 40% output):

Claude API costs:

- 30M input × $3 = $90

- 20M output × $15 = $300

- Total: $390/month

Gemini API costs:

- 30M input × $1.25 = $37.50

- 20M output × $10 = $200

- Total: $237.50/month

Savings: $152.50/month (39% cheaper with Gemini)
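To run the same calculation for your own volume, here is a minimal sketch. The prices are the list prices from the table above and may change; the function name and the 60/40 input/output default are taken from my usage, not from either provider’s tooling.

```python
# USD per million tokens as (input, output), from the pricing table above.
PRICES = {"claude": (3.00, 15.00), "gemini": (1.25, 10.00)}


def monthly_api_cost(model: str, total_tokens_m: float,
                     input_share: float = 0.6) -> float:
    """Monthly cost in USD for a token volume given in millions."""
    input_price, output_price = PRICES[model]
    input_m = total_tokens_m * input_share
    output_m = total_tokens_m - input_m
    return input_m * input_price + output_m * output_price


if __name__ == "__main__":
    claude = monthly_api_cost("claude", 50)
    gemini = monthly_api_cost("gemini", 50)
    savings = claude - gemini
    print(f"Claude ${claude:.2f}, Gemini ${gemini:.2f}")
    print(f"Savings ${savings:.2f} ({savings / claude:.0%} cheaper)")
```

Because output tokens cost several times more than input tokens on both platforms, shifting `input_share` changes the gap noticeably, so use your real split.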

BUT—this misses critical factors:

Editing time value:

- Claude output: 8-10 hours less editing monthly

- Worth $600-750 at $75/hour rate

- Far exceeds $152.50 API savings
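The trade-off between the API premium and editing time can be written as a small break-even calculation. This is a sketch using my figures ($75/hour, $152.50 monthly premium); the function names are hypothetical, and your hourly rate will differ.

```python
def editing_value(hours_saved: float, hourly_rate: float = 75.0) -> float:
    """Dollar value of editing time saved in a month."""
    return hours_saved * hourly_rate


def net_monthly_value(hours_saved: float, api_premium: float = 152.50,
                      hourly_rate: float = 75.0) -> float:
    """Editing value minus the extra API spend for the pricier model."""
    return editing_value(hours_saved, hourly_rate) - api_premium


def break_even_hours(api_premium: float = 152.50,
                     hourly_rate: float = 75.0) -> float:
    """Hours of editing that must be saved to cover the API premium."""
    return api_premium / hourly_rate
```

With these inputs the premium is covered after roughly two hours of saved editing per month, so the 8-10 hours I measured leaves a comfortable margin.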

My actual strategy:

- Bulk processing (classification, analysis): Gemini (cost savings)

- Customer content (blogs, reviews, code): Claude (quality justifies cost)

Hidden Costs That Matter

Quality failure costs:

- One article tanking in search = months of subscription savings lost

- Buggy code deployment = hours debugging

- Security vulnerabilities = potential breach costs

Learning curve costs (5-person team):

- Gemini: 2 extra hours per person = 10 hours

- Worth $750 at $75/hour

- Often exceeds hard subscription costs

Opportunity costs:

- Poor content rankings = lost traffic and revenue

- Extra editing time = less content produced

- Debugging time = delayed launches

🎯 Pricing Recommendations by User Type

| User Type | Best Choice | Why |
| --- | --- | --- |
| Casual users | Both free tiers | Test before committing |
| Google Workspace users | Gemini Advanced | Effective $9.99 + integration |
| Content creators | Claude Pro | Quality > cost for revenue content |
| High-volume API | Gemini (bulk) | Strategic split |
| Developers | Claude Pro | Code quality prevents tech debt |

⚡ Speed, Context & Technical Performance

Response speed and context capacity represent dramatic differences, but understanding when they matter determines the right choice.

Speed Impact on Workflow

Daily time savings (50-60 queries):

- Gemini saves ~3 minutes daily in waiting

- Equals 66 minutes monthly

- Seems significant initially

BUT—total time-to-completion matters more:

For blog posts:

- Claude content editing: 20 minutes

- Gemini content editing: 45 minutes

- Claude saves: 25 minutes per article

The 4-second response advantage becomes irrelevant when Claude saves 25 minutes in revision.
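The comparison above can be checked with a few lines of arithmetic. A minimal sketch, assuming 22 working days per month (the figure implied by 3 minutes daily adding up to 66 minutes monthly) and the editing times from my blog post tests; the function names are hypothetical.

```python
import math

WORKING_DAYS_PER_MONTH = 22  # assumption: 3 min/day -> 66 min/month


def monthly_wait_savings(daily_saving_min: float = 3.0) -> float:
    """Minutes per month saved by faster responses."""
    return daily_saving_min * WORKING_DAYS_PER_MONTH


def editing_savings(articles: int, slow_edit_min: float = 45.0,
                    fast_edit_min: float = 20.0) -> float:
    """Minutes of editing saved across a number of articles."""
    return articles * (slow_edit_min - fast_edit_min)


def break_even_articles(daily_saving_min: float = 3.0) -> int:
    """Articles per month at which editing savings match wait savings."""
    per_article = editing_savings(1)
    return math.ceil(monthly_wait_savings(daily_saving_min) / per_article)
```

With these figures, three articles a month are enough for the editing savings to outweigh the response-time advantage.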

When Gemini’s speed matters:

- Rapid brainstorming sessions

- High-volume social content

- Quick research and exploration

- Frequent small iterations

When Claude’s quality matters more:

- Long-form content creation

- Production code development

- Strategic analysis

- Customer-facing deliverables

Context Window Reality Check

Where context size doesn’t matter (most work):

- Blog posts up to 5,000 words

- Typical business documents

- 10-15 competitor articles

- Standard coding projects

- Daily operational tasks

Where Gemini’s 1M context is game-changing:

- 400-page competitive intelligence reports

- Complete codebase security audits

- Multiple research papers simultaneously

- Months of customer feedback

- Multi-book literature reviews

My experience:

I rarely need more than 200K tokens in daily work. But when I do—analyzing entire codebases or processing massive datasets—Gemini’s capacity is indispensable.

✅ Claude vs Gemini: Accuracy & Reliability Testing

Publishing incorrect information damages credibility and SEO performance.

Factual Accuracy Results

Technical specifications test (10 marketing tools):

- Claude: 8/10 accurate, 2/10 with appropriate caveats

- Gemini: 7/10 accurate, 1/10 outdated without acknowledgment

Hallucination testing (fictional products):

- Claude: 0/10 fabrication (always acknowledged uncertainty)

- Gemini: 3/10 fabricated plausible features confidently

Historical facts (20 questions):

- Claude: 19/20 correct

- Gemini: 18/20 correct

Winner: 🏆 Claude – Lower hallucination rate, appropriate uncertainty

Trust and Reliability Assessment

When to trust Claude more:

- Professional content for publication

- Technical specifications and data

- Statistics readers will rely on

- Content that will be cited

- High-stakes situations

When Gemini is sufficiently reliable:

- Preliminary research with verification

- Internal documents

- Creative brainstorming

- Speed-priority situations

Key difference: Claude acknowledges uncertainty appropriately. Gemini’s confident incorrectness requires more skepticism of all outputs.

🎯 Real-World Use Cases: Strategic Recommendations

Clear patterns emerged about when each AI excels.

Choose Claude When:

📝 Blog writing and long-form content

- 40-50% less editing time

- Natural, engaging tone

- Ranks 2-3 positions higher in search

💻 Professional code development

- 85% first-run success vs 65%

- Better security practices

- Cleaner, maintainable architecture

⭐ Product reviews for affiliates

- 35% higher conversion rates

- More authentic, balanced tone

- Builds long-term trust

📧 Email marketing campaigns

- Conversational, personal voice

- Compelling subject lines

- Better relationship building

🔍 SEO-optimized content

- Natural keyword integration

- Better search intent understanding

- Professional meta descriptions

Choose Gemini When:

📚 Analyzing massive documents

- 1M token capacity enables full codebase review

- Multi-book synthesis

- Comprehensive research compilations

⚡ Quick research tasks

- 25-35% faster responses

- Rapid information gathering

- Preliminary exploration

🔗 Google Workspace workflows

- Native Drive/Docs/Sheets/Gmail integration

- Automatic version management

- Seamless cross-platform work

🎬 Video content analysis

- Process YouTube videos directly

- No manual transcription needed

- Visual context preserved

🔄 High-volume API automation

- 40% cost savings at scale

- Bulk processing efficiency

- Large dataset operations

🏆 Claude vs Gemini: The Verdict & Strategic Recommendations

After 90 days testing, $500+ invested, and hundreds of hours across revenue-generating businesses, here are clear recommendations.

For Digital Marketers & Content Creators

Choose: 🏆 Claude Sonnet 4.5

Measurable results:

- 8-12 hours saved monthly (editing time)

- 35% higher affiliate conversions

- 2-3 positions better in search rankings

- $2,800 additional monthly revenue (for $8K baseline)

The quality improvement pays for itself many times over. The time savings alone are worth $600-900 monthly.

For Professional Developers

Choose: 🏆 Claude Sonnet 4.5

Why it matters:

- 85% first-run success reduces debugging

- Better security prevents vulnerabilities

- Cleaner architecture reduces tech debt

- Educational explanations improve skills

Use Gemini for:

- Full codebase analysis (exceeds Claude’s context)

- Rapid prototyping

- Google Cloud development

For Google Workspace Power Users

Choose: 🏆 Gemini 2.5 Pro

Native integration justifies trade-offs:

- Direct file access saves hours weekly

- 2TB storage ($10 value)

- Effective cost: $9.99 vs Claude’s $20

- Seamless operational workflows

Supplement with Claude for final content if quality requirements justify dual subscription.

For Researchers & Analysts

Choose: 🏆 Gemini 2.5 Pro

Critical advantages:

- 1M context for massive datasets

- Multimodal for video/audio

- Real-time web search

- Comprehensive literature reviews

Use Claude for final report writing where prose quality matters.

My Personal Strategy (BBWebTools.com)

Both subscriptions: $40/month total

Claude (80% of work):

- All blog posts and content

- All production code

- Product reviews

- Email campaigns

- Strategic analysis

Gemini (20% of work):

- Large document analysis (100+ pages)

- Google Workspace automation

- Preliminary research

- Video content analysis

- Bulk API processing

ROI justification:

- 8-12 hours saved monthly

- $2,800+ extra monthly revenue

- Reduced risk from better quality

- Capability for edge cases

The combined cost is trivial compared with the value generated.

❓ Claude vs Gemini: Frequently Asked Questions

Which AI is better for beginners: Claude or Gemini?

Claude is significantly more beginner-friendly, with a simpler interface, intuitive interaction, and educational responses. Users are typically productive within an hour. Gemini’s more complex interface requires more learning time.

Can I use both Claude and Gemini together?

Absolutely. Many power users, including myself, find strategic value in running both. Use Claude for quality-critical work and Gemini for capacity-critical tasks. The combined $40/month is modest compared with the capability benefits.

Claude vs Gemini: How do API costs compare at scale?

Gemini is about 39% cheaper ($237.50 vs $390 for 50M tokens). However, factor in editing time: Claude’s quality saves 8-10 hours monthly ($600-750 value), far exceeding the $152.50 cost difference.

Which handles non-English better, Claude or Gemini?

Both handle major languages reasonably well. Quality varies by language. For English, Claude produces more natural output. Test both with your specific language and use case.

Claude vs Gemini: which has better privacy and security?

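Both have strong policies. Anthropic lets consumer users choose whether their conversations are used for model training, so check that setting in your account. Gemini falls under Google’s privacy framework. Review each provider’s policies before sharing sensitive data. Enterprise plans offer additional security controls.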

How often are Claude and Gemini models updated?

Both release updates regularly, with major versions every few months and minor improvements that often go unannounced. Subscribe to the official blogs for announcements.

Can Claude or Gemini replace human writers and developers?

Not entirely. They’re powerful assistants that dramatically improve productivity but still require human oversight, editing, fact-checking, strategic direction, and quality assurance.

What if I’m already using ChatGPT? Should I switch to Claude or Gemini?

Both offer different capabilities. Many professionals use multiple AI tools. Claude often produces higher-quality prose and code. Gemini offers superior Google integration and context. Test all three. Check the ChatGPT vs Gemini and ChatGPT vs Claude reviews.

Which has better customer support, Claude or Gemini?

Claude offers responsive support with quick response times. Gemini leverages Google’s infrastructure—excellent for Workspace customers, more complex for standalone. Enterprise gets dedicated support from both.

Will AI tool prices change?

Likely yes over time. AI economics are evolving. Overall trend: better capability at similar or lower prices. Specific pricing can change with new releases or market conditions.

🎯 Conclusion: Claude vs Gemini

The Claude vs Gemini choice isn’t about finding a universal winner—it’s understanding which tool fits your specific needs, workflow, and priorities.

If you prioritize content quality, code reliability, and SEO performance: Claude Sonnet 4.5 delivers measurably superior results. The 40-50% editing time reduction, 35% conversion improvement, and higher search rankings justify choosing Claude despite cost premiums.

If you prioritize massive document processing, Google integration, or API cost efficiency: Gemini 2.5 Pro provides capabilities Claude cannot match. The 1M context window and native Google integration create workflows impossible elsewhere.

For most professionals: The optimal strategy is using both strategically. Claude for quality-critical customer-facing work. Gemini for capacity-critical document analysis and Google workflows. This costs $40 monthly but delivers capabilities neither provides alone.

My recommendation: Try both free tiers for 2-3 weeks using them for actual work. Track which saves more time, produces better results, integrates with your workflow, and feels natural. Let your experience guide the decision.

The AI landscape evolves rapidly. Both companies continue improving capabilities and competitive responses. Bookmark this comparison—I’ll update it as significant changes occur. The fundamental strengths described here will likely persist while specific metrics improve.

Thank you for reading this comprehensive analysis. I hope my real-world testing helps you make an informed decision that improves productivity and delivers better business results.
