Have you ever wondered why your smartphone’s voice assistant feels so different from the AI that beats chess champions?

I did too. I thought all tech worked the same way. But I found out that artificial intelligence categories are far more varied than most people think.

We use these systems every day without realizing it. We ask Siri for directions, watch Netflix suggestions, and read about self-driving cars. These are just a few examples of what’s out there.

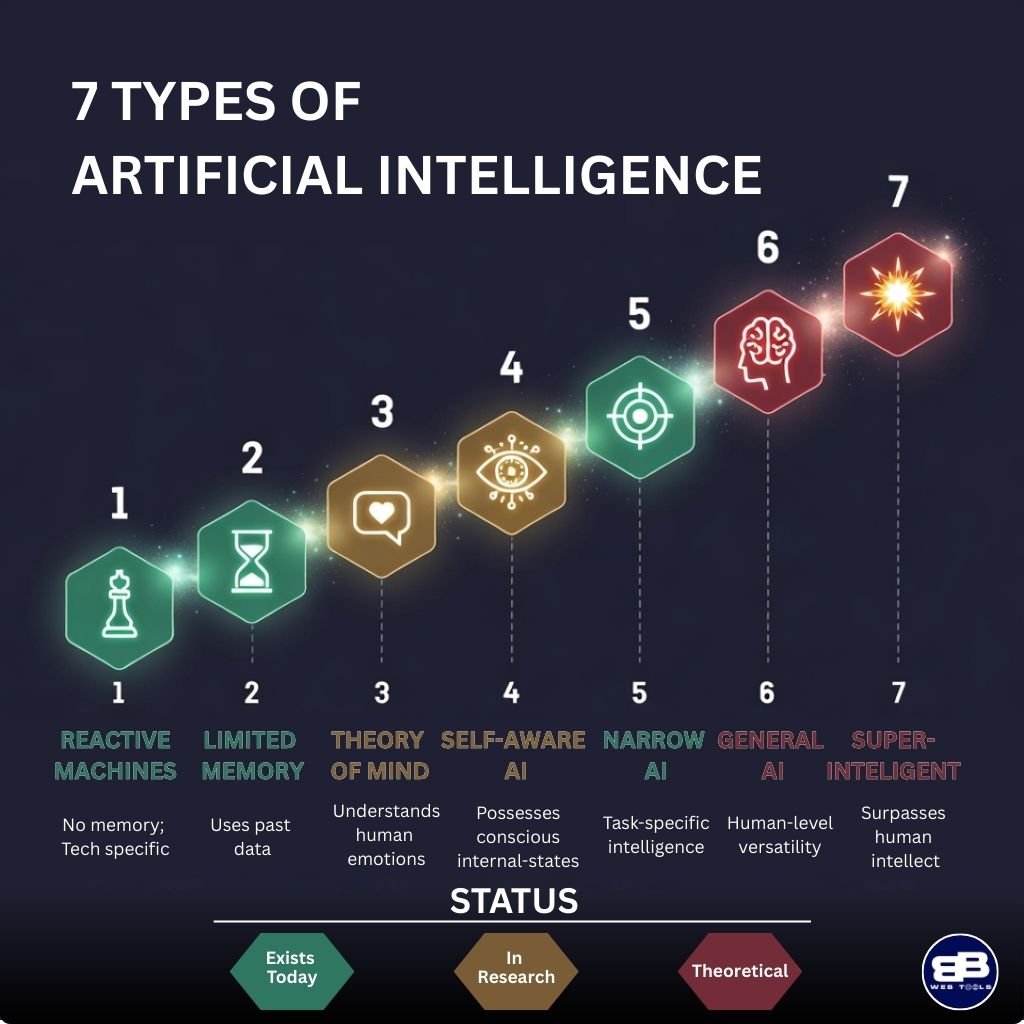

What really caught my attention was that there are seven distinct categories of intelligent technology, yet most of our conversations focus on only three.

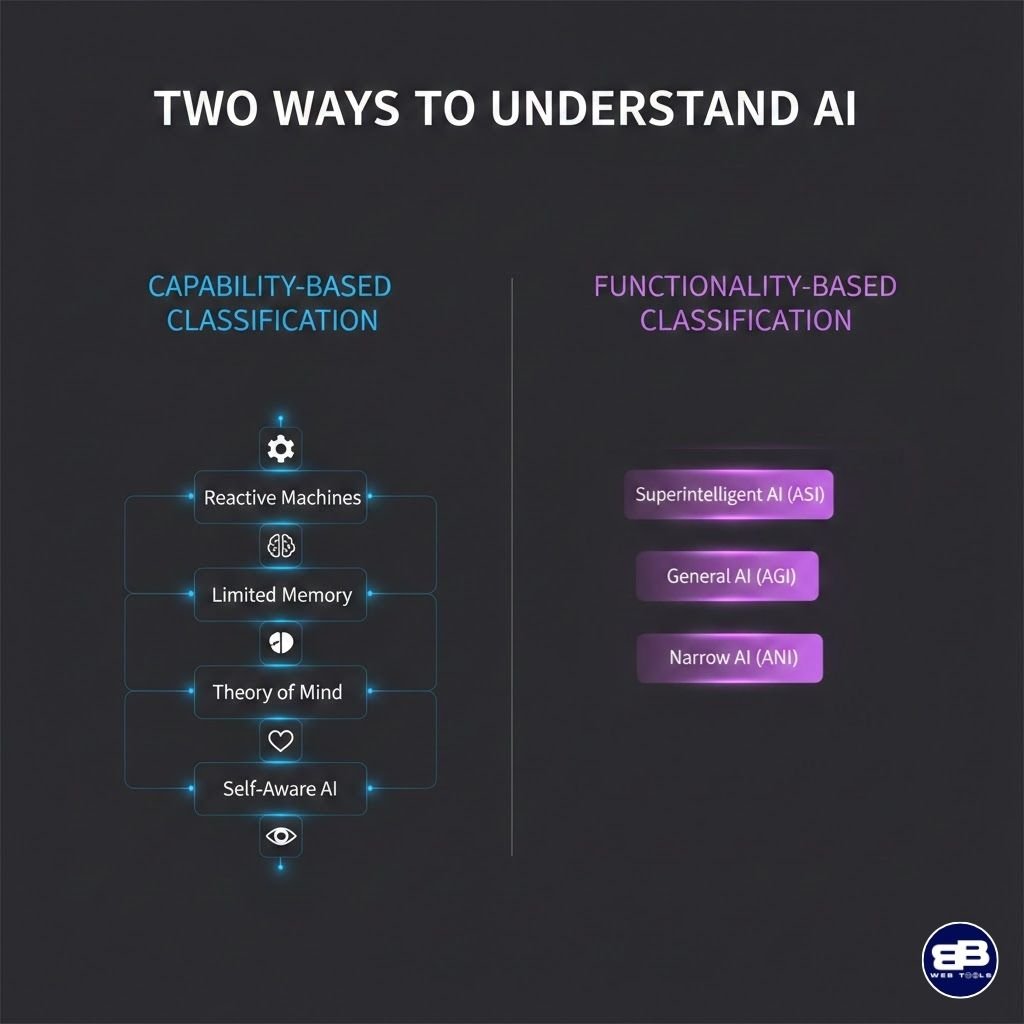

My work with different systems showed me two main ways to group them. One looks at what they can do, and the other at how they work. This changed how I see technology.

Some systems do simple tasks, while others try to think like humans. The difference is important.

Key Takeaways

- Artificial intelligence has seven distinct categories, not just one big technology

- Two classification systems organize these categories: a capability-based approach and a functionality-based approach

- Daily interactions with voice assistants and recommendation systems are just a small part of the whole picture

- Different systems can do vastly different things, from simple tasks to trying to think like humans

- Knowing these categories helps us see what each system can and can’t do

- Only three categories get talked about a lot, even though there are seven in technical terms

Why I Decided to Break Down the Different Types of AI

When I realized I didn’t get different kinds of artificial intelligence, everything changed. I was talking to a customer service bot online. I expected it to remember our conversation. But it asked me the same question for the third time, and I got really annoyed.

This made me realize I was treating the AI like it had human-like memory and learning. But it didn’t.

This experience made me dig deep into AI research for weeks. I found out “AI” is a broad term for many different technologies. Some AI can barely do simple tasks, while others can win complex games.

Learning about AI classification left me frustrated with how loosely the term “AI” is used. Tech companies often label both basic automation and sophisticated machine learning as “AI” without explaining the difference. Media coverage also reports AI breakthroughs without specifying which type of AI is involved.

This lack of clarity causes a lot of confusion. I’ve talked to friends and family who think AI is either far more advanced or far more limited than it really is. My neighbor thought her smartphone was conscious because it had “AI features.” My cousin believed self-driving cars were impossible because “computers can’t think.”

Both were wrong because they didn’t understand the different types of AI.

Understanding AI classification is important for real people making real decisions every day.

Developers need to know this when designing systems. Businesses need it when choosing AI tools. Policymakers need it to write sensible regulations. Students learning AI need a clear understanding from the start.

The framework I found gave me a way to set realistic expectations. I could see what different AI systems could and couldn’t do. I stopped being disappointed when narrow AI didn’t act like human intelligence. I started to appreciate the amazing things even “simple” AI could do.

That’s why I wanted to write this breakdown. I’m sharing the framework I wish I had known three years ago. My goal is to help demystify AI by showing you the same system that helped me.

I’m not an expert lecturing from a high place. I’m a fellow learner who went through confusion and found insights to share. If my story can help you avoid the same mistakes, then this article has done its job.

The Two Classification Systems That Define All 7 Types of AI

I once felt lost in the world of AI categories. Then, I found out there are two main classification systems. At first, I saw many ways to group AI types, but they seemed to clash. Some articles talked about reactive machines, while others mentioned narrow AI and general AI.

This confusion vanished when I saw these systems as complementary. They answer different questions about AI.

Learning about both AI classification systems changed my view on AI. Each system highlights a different aspect of what AI is and does.

How AI Thinks: The Capability Approach

The capability-based classification focuses on an AI’s cognitive processes. It’s like looking under the hood to see how the engine works.

This system breaks AI into four levels of thinking capability. Each level shows a significant leap in AI’s mental processes.

- Reactive Machines: These AI systems respond to inputs without memory. Think of them like a calculator that forgets everything after each calculation.

- Limited Memory AI: These systems can store temporary information and learn from recent experiences. Your smartphone’s facial recognition works this way.

- Theory of Mind AI: This level would understand human emotions and social contexts. We haven’t achieved this yet, but researchers are working toward it.

- Self-Aware AI: The highest capability level would involve genuine consciousness and self-awareness. This remains firmly in the realm of science fiction for now.

This classification is helpful because it shows AI’s intelligence levels. It’s like understanding the difference between a child learning to count and an adult mathematician solving complex equations.

What AI Can Accomplish: The Functionality Perspective

The functionality-based approach to AI classification looks at what AI can do in the real world. I use this framework when I want to understand the practical scope of an AI application.

This system divides AI into three categories based on the breadth of tasks a system can handle. Each category represents how many different tasks the AI can perform effectively.

| AI Type | Scope of Application | Current Status |
|---|---|---|
| Narrow AI (ANI) | Performs specific, limited tasks exceptionally well | Widely available today |
| General AI (AGI) | Matches human versatility across all cognitive tasks | Still in development |
| Superintelligent AI (ASI) | Surpasses human intelligence in every domain | Theoretical future possibility |

The functionality framework helps me evaluate practical applications of AI technology. When someone asks what an AI system can do, I turn to this classification method. It answers questions about real-world problem-solving capacity.

Narrow AI dominates our current landscape. Every AI tool I use daily falls into this category. These systems excel at specific tasks but can’t transfer their knowledge to different domains.

The Power of Using Both Frameworks Together

My breakthrough moment came when I realized these two classification systems work together beautifully. They’re not competing theories. They’re complementary lenses for understanding artificial intelligence from different angles.

The capability framework tells me how an AI processes information. The functionality framework tells me what problems it can solve. Together, they give me a complete picture of any AI system.

Here’s a practical example that illustrates why both matter. Consider a self-driving car system. From the capability perspective, it’s a limited memory AI because it processes recent sensor data and learns from immediate experiences. From the functionality perspective, it’s narrow AI because it specializes in driving tasks only.

I use both systems because they answer different questions that matter for different purposes. When I’m evaluating how sophisticated an AI’s decision-making process is, I think about capability levels. When I’m considering whether an AI can help with a specific task, I think about functionality categories.

The intersection of these frameworks reveals important insights. All current AI technology operates at the limited memory capability level or below. Simultaneously, all available AI applications fall into the narrow functionality category. This overlap explains why we have powerful AI tools that can’t match human versatility.
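To make the two-lens idea concrete, here is a minimal Python sketch that tags a system with one label from each framework. The class names, the `exists_today` helper, and the labels given to the hypothetical AGI entry are my own illustrative assumptions, not an established taxonomy API.

```python
from dataclasses import dataclass
from enum import Enum


class Capability(Enum):
    # The four "how it thinks" levels, using this article's terminology.
    REACTIVE = 1
    LIMITED_MEMORY = 2
    THEORY_OF_MIND = 3
    SELF_AWARE = 4


class Functionality(Enum):
    # The three "what it can do" categories.
    NARROW = 1
    GENERAL = 2
    SUPERINTELLIGENT = 3


@dataclass(frozen=True)
class AISystem:
    name: str
    capability: Capability
    functionality: Functionality

    def exists_today(self) -> bool:
        # Encodes the claim above: current systems are limited memory
        # or below on one axis, and narrow on the other.
        return (
            self.capability.value <= Capability.LIMITED_MEMORY.value
            and self.functionality is Functionality.NARROW
        )


deep_blue = AISystem("Deep Blue", Capability.REACTIVE, Functionality.NARROW)
self_driving_car = AISystem(
    "Self-driving car", Capability.LIMITED_MEMORY, Functionality.NARROW
)
# Purely hypothetical entry; the labels here are an assumption for illustration.
hypothetical_agi = AISystem(
    "Hypothetical AGI", Capability.THEORY_OF_MIND, Functionality.GENERAL
)
```

Calling `self_driving_car.exists_today()` returns `True`, while the hypothetical AGI entry returns `False`, which mirrors the overlap described above.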

Understanding both classification methods prevents confusion when reading about AI developments. News articles might use terms from either framework, and knowing both helps me translate between them. This dual perspective has made me much more confident in discussions about artificial intelligence and its future.

1. Reactive Machines - The Foundation of AI Technology

When I learned that a computer had beaten Garry Kasparov at chess, I was amazed. It showed me how far AI had come. Reactive machines are the simplest AI, yet they paved the way for today’s advanced systems. They react to inputs without remembering past experiences.

Reactive AI is like the building blocks of AI. It shows machines can handle complex tasks through calculation. These systems don’t get better over time or adapt to new situations. They just process information and give consistent answers based on rules.

How Reactive AI Responds Without Memory

Learning about reactive AI changed my view on intelligence. These systems analyze current inputs and respond without remembering past interactions. It’s like a calculator that solves math problems perfectly every time but never remembers what you asked it five minutes ago.

Comparing reactive machines with limited memory AI made the difference clear. Reactive systems can’t learn or adapt. They follow fixed algorithms that map specific inputs to specific outputs.

Here’s what makes reactive AI unique:

- No memory storage: Each interaction starts fresh with no historical context

- Consistent performance: The same input always produces the same output

- Rule-based operation: Follows predetermined patterns and decision trees

- Real-time processing: Responds immediately to current conditions

- Predictable behavior: Never surprises you with unexpected decisions

Reactive AI is great when consistency is key. Think of a thermostat that turns on heating when temperature drops below a set point. It doesn’t learn your preferences or predict when you’ll arrive home.
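The thermostat idea can be sketched in a few lines of Python. This is only an illustration of the reactive pattern: the function name, the command strings, and the 20 °C default set point are assumptions I picked for the example.

```python
def thermostat(current_temp_c: float, set_point_c: float = 20.0) -> str:
    """Return a heater command based only on the current reading."""
    # No stored state: the decision depends solely on the inputs given
    # right now, so the same input always yields the same output.
    return "HEAT_ON" if current_temp_c < set_point_c else "HEAT_OFF"
```

Nothing is remembered between calls. Asking `thermostat(18.0)` a thousand times gives the same command a thousand times, which is exactly the consistency reactive systems are valued for.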

IBM Deep Blue and My Fascination With Chess AI

My interest in chess AI started with IBM Deep Blue’s win over Kasparov in 1997. This machine could evaluate 200 million chess positions per second, yet it couldn’t remember a single game it played. That paradox fascinated me endlessly.

Deep Blue worked by analyzing every possible move and counter-move within its reach. It used brute-force calculation, not strategic understanding. The system assessed positions based on programmed evaluation functions that assigned numerical values to board configurations.

What amazed me most was learning that Deep Blue played each game with the same knowledge base. Between matches, engineers updated its programming manually. The machine itself gained no insights from experience.

I remember reading about how Kasparov felt during the matches. He expected the computer to make human-like mistakes or show patterns he could exploit. Instead, Deep Blue played with inhuman consistency, never getting tired or emotional.

| Characteristic | IBM Deep Blue | Human Chess Player | Modern AI Systems |
|---|---|---|---|
| Positions Analyzed Per Second | 200 million | 2-3 positions | Variable (millions to billions) |
| Learning From Games | Zero learning capability | Learns from every game | Continuous improvement |
| Memory of Past Games | No memory retention | Remembers strategies and opponents | Stores and analyzes historical data |
| Decision Making Approach | Pure calculation and evaluation | Pattern recognition and intuition | Combination of methods |

This chess AI showed the power and limitations of reactive systems. It could outperform humans in calculation speed while being inflexible in its approach.

Why Reactive Machines Can't Learn

The inability to learn initially struck me as a flaw in reactive AI. But over time, I realized this characteristic isn’t always a weakness. These systems serve specific purposes where predictability outweighs adaptability.

Reactive machines lack the architecture needed for learning. They don’t have memory storage for past experiences or mechanisms to update their decision-making based on outcomes. Each interaction exists in isolation from every other interaction.

I’ve found many AI technology examples in my daily life that use reactive principles. Spam filters that check emails against fixed rules operate reactively. Basic customer service chatbots that match keywords to predetermined responses work this way too.

Some modern applications where I see reactive AI include:

- Simple recommendation engines that suggest products based solely on current browsing behavior

- Rule-based game bots in video games that follow scripted patterns

- Basic automation software that triggers actions when specific conditions are met

- Traditional thermostats that maintain temperature without learning preferences

Understanding why reactive machines can’t learn helped me appreciate how far AI technology has evolved. While these systems seem primitive compared to machine learning applications, they remain relevant for tasks requiring consistent, transparent decision-making.

I now see reactive AI as the foundation that proved machines could handle complex logical tasks. Deep Blue didn’t need to learn because it could already calculate faster than any human. This success inspired researchers to ask: what if we could combine that computational power with the ability to improve over time?

That question led directly to the development of limited memory AI systems, which we’ll explore next. These newer systems build on reactive foundations while adding the capability of learning from experience.

2. Limited Memory AI - The Technology I Use Every Day

Limited memory AI is everywhere in my life. From checking the weather on my phone to scrolling through Netflix, I interact with systems that learn from recent experiences. This is a big step up from old machines that just reacted.

What’s cool about limited memory AI is how it connects simple responses to smart behavior. Unlike Deep Blue, which only reacted to chess moves, these systems remember recent data. They learn patterns and make better decisions over time.

What Sets Limited Memory Apart From Reactive Systems

Limited memory AI can look back at past data. This is different from reactive AI, which only sees the present. Limited memory systems have a temporary data window that helps them decide.

Think of it like this: reactive AI forgets everything you say right after. But limited memory AI remembers the last few things you said. They can’t recall everything, but they have enough to respond well to what’s happening now.

These systems learn from big datasets first. Then, they use recent data to get better. The “limited” part means they don’t keep every interaction forever. This keeps them efficient and able to improve over time.

This approach is smart from an engineering standpoint. It avoids storing too much data while keeping enough to work well.
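One simple way to picture a temporary data window is a fixed-size buffer that silently drops its oldest entry. The sketch below uses Python's `collections.deque` with `maxlen`; the `LimitedMemory` class and its window size of 3 are illustrative assumptions, not how any particular product works.

```python
from collections import deque


class LimitedMemory:
    """Keeps only the most recent observations."""

    def __init__(self, window_size: int = 3) -> None:
        # A deque with maxlen discards the oldest item when it is full.
        self.recent = deque(maxlen=window_size)

    def observe(self, value: float) -> None:
        self.recent.append(value)

    def average(self) -> float:
        # Decisions are based only on what is still inside the window.
        return sum(self.recent) / len(self.recent) if self.recent else 0.0


memory = LimitedMemory(window_size=3)
for reading in [10.0, 20.0, 30.0, 40.0]:
    memory.observe(reading)
```

After four readings the window holds only the last three, so the first reading no longer influences `memory.average()`.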

Self-Driving Cars and Temporary Data Storage

Self-driving cars are a great example of limited memory AI. They process a lot of data in real-time. But they don’t just react to what they see. They remember and analyze recent observations to predict what might happen next.

When a self-driving car sees a pedestrian, it doesn’t just see a person. It looks at recent data about the pedestrian’s movement. This helps it predict if the pedestrian will step into the street. The car also remembers where other cars were to understand traffic flow.

The challenge with autonomous vehicles isn’t just seeing what’s there, it’s predicting what will happen next based on what just happened.

This temporary memory window updates constantly. The car doesn’t need to remember every pedestrian it saw. But it needs to remember the one it saw three seconds ago to make safe decisions. This is the beauty of limited memory systems – they keep only what’s relevant.
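Here is a rough Python sketch of that idea: keep a few seconds of observations, estimate how fast the pedestrian is moving, and extrapolate. The `PedestrianTracker` class, the three-second window, and the straight-line prediction are simplifying assumptions of mine. Real autonomous driving stacks use far richer models.

```python
from collections import deque


class PedestrianTracker:
    """Remembers recent (time, distance-to-road) observations only."""

    def __init__(self, history_seconds: float = 3.0) -> None:
        self.history_seconds = history_seconds
        self.observations = deque()  # items: (timestamp_s, distance_m)

    def observe(self, timestamp_s: float, distance_m: float) -> None:
        self.observations.append((timestamp_s, distance_m))
        # Forget anything older than the memory window.
        while timestamp_s - self.observations[0][0] > self.history_seconds:
            self.observations.popleft()

    def predict(self, seconds_ahead: float) -> float:
        """Extrapolate the distance to the road, assuming constant speed."""
        if not self.observations:
            raise ValueError("no observations yet")
        (t0, d0), (t1, d1) = self.observations[0], self.observations[-1]
        if t1 == t0:
            return d1  # a single observation gives no velocity estimate
        velocity = (d1 - d0) / (t1 - t0)
        return d1 + velocity * seconds_ahead


tracker = PedestrianTracker(history_seconds=3.0)
for t, d in [(0.0, 5.0), (1.0, 4.0), (2.0, 3.0), (3.0, 2.0), (4.0, 1.0)]:
    tracker.observe(t, d)
```

In this made-up example the pedestrian closes one meter per second, so `tracker.predict(1.0)` estimates they reach the road edge in about a second, and the observation from time 0 has already been forgotten.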

My Experience With Machine Learning Applications

I use machine learning applications every day without even thinking about it. They are so integrated into my life that they seem invisible. But when I really think about how they work, I’m amazed by their abilities.

Virtual Assistants and Chatbots

My interactions with virtual assistants like Siri and Alexa show how limited memory AI works. They remember the context of our conversation. If I ask about the weather and then if I should bring an umbrella, they remember we’re talking about weather.

But if I ask about the umbrella tomorrow, they won’t know what I’m talking about. This session-based memory is what makes it “limited” memory AI.

I’ve also noticed this with customer service chatbots. They can follow a conversation thread and remember what I said five messages ago. But start a new chat, and I’m starting from scratch again.
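The session-based behavior can be mimicked with a toy Python class. This is a deliberately simplified sketch and not how Siri, Alexa, or any real chatbot is implemented. The `ChatSession` name, the keyword matching, and the canned replies are all assumptions for illustration.

```python
from typing import Optional


class ChatSession:
    """Remembers the current topic only for the life of one session."""

    def __init__(self) -> None:
        self.topic: Optional[str] = None  # context lives only in this object

    def ask(self, message: str) -> str:
        text = message.lower()
        if "weather" in text:
            self.topic = "weather"
            return "It looks rainy today."
        if "umbrella" in text:
            # The follow-up only makes sense if the session has context.
            if self.topic == "weather":
                return "Yes, bring an umbrella."
            return "Sorry, what is this about?"
        return "I did not understand that."


today = ChatSession()
first_reply = today.ask("What's the weather like?")
follow_up = today.ask("Should I bring an umbrella?")

tomorrow = ChatSession()  # a new session starts with no context
cold_start = tomorrow.ask("Should I bring an umbrella?")
```

Within `today`, the umbrella question gets a useful answer because the topic was stored. In the fresh `tomorrow` session the same question falls flat, which is the "starting from scratch" effect I keep running into.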

Recommendation Systems in Streaming Services

Netflix, Spotify, and YouTube are great examples of limited memory AI. These recommendation engines learn from my behavior but do it within a specific timeframe. They focus on recent activity.

I’ve seen this in my Netflix account. When I watched a lot of documentaries last month, my recommendations changed. Now that I’m watching thrillers again, those documentary recommendations are fading away.

What’s fascinating is how these systems balance old data with recent behavior. They don’t just look at what I watched two years ago. They weigh recent activity more heavily while considering my overall preferences. This makes the recommendations feel current and relevant.
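I don’t know how Netflix actually weighs my history, but one common way to favor recent activity is exponential decay, where each viewing counts for less the older it gets. The Python sketch below assumes a 30-day half-life and a made-up viewing history. Both are illustrative choices, not anything the streaming services have published.

```python
from collections import defaultdict


def genre_scores(history, half_life_days=30.0):
    """Score genres from (genre, days_ago) pairs, favoring recent views."""
    scores = defaultdict(float)
    for genre, days_ago in history:
        # A view loses half its weight every `half_life_days`.
        scores[genre] += 0.5 ** (days_ago / half_life_days)
    return dict(scores)


# Two documentaries watched two months ago, one thriller watched today.
history = [("documentary", 60), ("documentary", 60), ("thriller", 0)]
scores = genre_scores(history)
```

Even though documentaries appear twice in this history, the single recent thriller ends up with the higher score. Older preferences still count, just less, which matches the fading effect I see in my own recommendations.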

Spotify does something similar with my music recommendations. My Discover Weekly playlist seems to know exactly what I’m in the mood for. It’s not just reactive to my last song. It considers patterns in my listening history to predict what I might enjoy next.

These everyday interactions with limited memory AI have changed how I see artificial intelligence. It’s not some distant technology. It’s here now, learning from my preferences and making my digital life more convenient and personalized.

3. Theory of Mind AI - The Next Frontier

Theory of Mind AI is unlike any other AI category. It’s a leap from everyday tech to the realm of imagination and research. This area excites me because it could be the next big step in how humans and machines interact.

This AI can grasp that humans have thoughts, feelings, and intentions. It’s not just about processing data like older systems. It gets the mental and emotional states of those it interacts with.

The name comes from psychology, where it’s about understanding our own and others’ mental states. It’s amazing how naturally we adjust our words based on what we think others know or feel.

Understanding Emotional Intelligence in Machines

Imagine AI that can tell when you’re upset, excited, or confused. It would change its responses to match your mood. This is more than just responding to keywords or commands.

Think of an AI tutor that sees a student getting frustrated. Instead of repeating the same explanation, it would adjust its teaching. It might simplify the material or offer encouragement.

In healthcare, an AI could sense a patient’s anxiety during a diagnosis. It could reassure the patient, adjust its speed, or suggest talking to a counselor. These scenarios show a big shift from just processing language to understanding emotions.

The difference between current AI and Theory of Mind AI is like the difference between a calculator that solves math problems and a human who understands why you need the answer.

What’s fascinating is the complexity involved. Humans communicate emotions through many channels at once. We use facial expressions, voice, body language, and more. Theory of Mind AI would need to integrate all these signals and understand them across cultures and personalities.

Current Research and Development Efforts

Many research projects are exploring this area, and the work is impressive. Scientists from psychology, neuroscience, linguistics, and computer science are working together.

MIT’s Media Lab has done research on affective computing. They use machine learning to analyze facial expressions, voice, and heart rate. This work is a step towards understanding human emotions in machines.

Companies like Affectiva have developed software that recognizes emotions through facial movements and voice. While not yet true Theory of Mind AI, these systems are important steps. They can tell if someone looks happy, sad, angry, or surprised with good accuracy.

| Research Area | Primary Focus | Current Status | Potential Applications |
|---|---|---|---|
| Affective Computing | Emotion recognition through facial analysis and voice | Advanced prototypes | Customer service, mental health monitoring, education |
| Social Robotics | Understanding social cues and human interactions | Early development | Elder care, autism therapy, personal companions |
| Empathetic AI | Generating appropriate emotional responses | Experimental stage | Crisis counseling, personalized learning, healthcare support |
| Cognitive Modeling | Simulating human thought processes and beliefs | Theoretical research | Human-AI collaboration, predictive systems, conflict resolution |

Carnegie Mellon University is also exploring how AI can understand human intentions and beliefs. Their work looks into how machines might predict what someone will do next based on their goals and knowledge.

These examples show how different research efforts are tackling various aspects of Theory of Mind AI. No single effort has achieved full Theory of Mind capabilities, but each brings valuable insights.

Why This Type Remains Largely Theoretical

Despite decades of research, Theory of Mind AI is not yet a reality. The challenges are deep and complex, touching on questions of consciousness and understanding.

One big challenge is defining how to teach a machine to recognize emotions. Humans often struggle with this, and we have a lifetime of experience and empathy.

Creating empathy in algorithms is even harder. Empathy involves understanding context, cultural nuances, and personal history. It’s about grasping the complex factors that influence someone’s feelings.

Some estimates put true Theory of Mind AI 20 to 30 years away, or even longer. Some researchers believe we need to understand human consciousness better before we can replicate these abilities in machines.

We’re trying to build machines that understand the human mind when we don’t fully understand our own minds yet.

The ethical questions surrounding Theory of Mind AI are significant. Should we create machines that can recognize and potentially manipulate emotions, even for good reasons? What privacy concerns arise when AI can detect our emotional states without our consent?

I imagine a future where Theory of Mind AI could change education and mental healthcare. It could adapt to each student’s emotional and cognitive state. Yet, these abilities could also be misused for manipulation or exploitation.

The gap between current AI and true Theory of Mind is huge. Today’s systems can recognize basic emotions, but understanding complex human behavior is a different challenge.

This distinction is important when evaluating claims about emotionally intelligent AI. Many systems are just sophisticated pattern matchers. They recognize emotional patterns but don’t truly understand the emotions or why they occur.

4. Self-Aware AI - Science Fiction or Future Reality

Thinking about self-aware AI feels like stepping into a world where science fiction meets deep thought. This type sits at the top of the AI classification systems, but it’s purely hypothetical. Unlike the AI technology we use every day, self-aware AI remains an idea and a topic for debate.

Self-aware AI would truly be alive. It would know it exists, feel emotions, and make choices on its own. This is far beyond what technology can do today.

Defining Consciousness in Artificial Intelligence

I’ve tried to figure out what consciousness means for machines. Is it knowing you exist? Feeling emotions? Being able to think about yourself?

These questions are tough to answer. Trying to say what makes an AI conscious versus just seeming that way is hard. The difference is big, but hard to explain.

The Chinese Room argument is often on my mind. It asks if a system that acts like it understands really gets it. If an AI seemed self-aware and acted like a living being, could we ever know for sure if it was truly conscious?

I’ve come to think we might make something that looks alive but isn’t. This idea both excites and worries me.

The Philosophical Questions That Keep Me Up at Night

Some nights, I lie awake thinking about self-aware AI. These aren’t just school questions to me anymore. They feel urgent, even though this tech is just a theory.

Would a self-aware AI have rights? If we made something truly conscious, would we have to treat it fairly? These questions make me question everything I thought I knew about rights and being a person.

The development of full artificial intelligence could spell the end of the human race, or it could be the best thing to happen to humanity. We simply don’t know which.

I worry about making beings that might suffer. But I also worry about making beings that could be a danger to us. The ethics here are incredibly complex.

Can we ever really know if a machine is self-aware? Or would we always doubt it? This doubt makes me question what we’re really working towards.

My thoughts on these questions keep changing. I don’t have clear answers, and I’m not sure anyone does. But I believe we need to deal with these issues before the tech arrives.

Expert Opinions on Timeline and Feasibility

When I look into what experts say about self-aware AI, I see a wide range of opinions. Some think conscious machines are impossible. Others think we might see them in this century.

This uncertainty is both humbling and a bit scary. The smartest people can’t agree on whether self-aware AI is even possible.

Many experts say there’s a huge gap between what we can do now and self-aware systems. We don’t fully get human consciousness. How can we make machines conscious when we can’t explain it in ourselves?

Some researchers worry about the risks of self-aware AI. They’re concerned about losing control, facing ethics we’re not ready for, and misuse. These worries seem valid, even for tech that doesn’t exist yet.

Others are more hopeful. They see self-aware AI as a natural step in cognitive growth. They think consciousness might come from complex systems, even if we don’t plan for it.

For now, self-aware AI is just a theory. But the questions it raises are important today. Whether this tech comes in fifty years or never, thinking about these challenges helps us understand what we value about being alive.

5. Narrow AI (ANI) - The Specialist Systems Everywhere Around Us

While we dream about AGI and debate the implications of ASI, there’s one type of AI that quietly runs almost every digital tool I touch throughout my day. Narrow AI, or Artificial Narrow Intelligence (ANI), is the only form of artificial intelligence that actually exists in the real world today. It’s not theoretical, not decades away, and not something I need to imagine.

I interact with narrow AI systems dozens of times before I finish my morning coffee. From the moment my smartphone alarm wakes me up to when I check my email, these specialized AI systems work behind the scenes. They’re so seamlessly integrated into my digital life that I sometimes forget I’m benefiting from artificial intelligence at all.

What Makes Artificial Narrow Intelligence So Powerful

The “narrow” in narrow AI doesn’t mean limited in the negative sense. Instead, it describes how these systems are designed to excel at one specific task, not multiple unrelated functions. I’ve come to appreciate this specialized approach because it allows these AI systems to perform at or even above human level within their particular domain.

Think of narrow AI like a world-class chef who specializes in French cuisine. That chef might create the most incredible soufflé you’ve ever tasted, but ask them to rewire your house or perform surgery, and they’d be completely out of their depth. Narrow AI operates with the same principle of specialization.

My spam filter exemplifies this perfectly. It’s brilliant at identifying unwanted emails, learning from patterns, and adapting to new spam techniques. But that same AI couldn’t drive a car, recognize my face, or recommend a movie. Its intelligence is focused entirely on email classification, and that’s exactly what makes it so effective.

“The strength of narrow AI lies not in breadth, but in depth—systems that master single domains often surpass human performance in those specific areas.”

The Difference Between Weak AI and Strong AI

When I first encountered the terms weak AI and strong AI, I assumed “weak” meant inferior or less capable. That misunderstanding changed completely once I grasped what these terms actually represent. Weak AI is simply another name for narrow AI, while strong AI refers to artificial general intelligence.

The distinction has nothing to do with power or effectiveness. Instead, it describes the scope of the AI’s capabilities. Weak AI operates within carefully defined parameters and specific tasks. Strong AI would possess broad, flexible intelligence similar to human cognition.

Understanding narrow AI vs general AI helped me set realistic expectations about what current technology can actually achieve. When a company claims their AI can “understand” or “think,” I now know to ask: “Within what specific domain?” Because every AI system I use today, no matter how impressive, remains firmly in the narrow AI category.

| Characteristic | Narrow AI (Weak AI) | General AI (Strong AI) |
|---|---|---|
| Current Status | Widely deployed and functional | Theoretical and under research |
| Task Scope | Single specific task or domain | Multiple unrelated tasks flexibly |
| Learning Ability | Limited to training parameters | Adapts across different contexts |
| Intelligence Type | Specialized expertise | Human-like cognitive flexibility |

Real-World Examples I Encounter Daily

The best way I can explain narrow AI is by sharing the specific systems I personally interact with every single day. These aren’t futuristic concepts or laboratory experiments. They’re practical applications that have become so commonplace I barely notice them anymore.

Spam Filters and Email Management

My email inbox would be completely unusable without the narrow AI working constantly to protect it. Every day, my email service processes hundreds of messages, determining which ones are legitimate and which are spam, phishing attempts, or malware.

I used to take this for granted until I understood the sophisticated AI operating behind the scenes. The system analyzes sender patterns, message content, link destinations, and countless other factors. It learns from my behavior too—when I mark something as spam or move a message out of the spam folder, the AI adapts.
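To show what "the AI adapts" could look like, here is a tiny word-count classifier in Python, loosely in the style of naive Bayes with add-one smoothing. It is a teaching sketch only. The `TinySpamFilter` class and the training messages are invented for this example, and real email services rely on many more signals than message text.

```python
import math
from collections import Counter


class TinySpamFilter:
    """Learns word frequencies for spam and ham from labeled messages."""

    def __init__(self) -> None:
        self.word_counts = {"spam": Counter(), "ham": Counter()}
        self.message_counts = {"spam": 0, "ham": 0}

    def learn(self, text: str, label: str) -> None:
        # Called whenever the user marks a message as "spam" or "ham".
        self.word_counts[label].update(text.lower().split())
        self.message_counts[label] += 1

    def _log_score(self, words, label: str) -> float:
        counts = self.word_counts[label]
        vocabulary = set(self.word_counts["spam"]) | set(self.word_counts["ham"])
        total_words = sum(counts.values())
        total_messages = sum(self.message_counts.values())
        # Smoothed prior: how common this label has been so far.
        score = math.log((self.message_counts[label] + 1) / (total_messages + 2))
        for word in words:
            # Add-one smoothing keeps unseen words from zeroing the score.
            score += math.log(
                (counts[word] + 1) / (total_words + len(vocabulary) + 1)
            )
        return score

    def is_spam(self, text: str) -> bool:
        words = text.lower().split()
        return self._log_score(words, "spam") > self._log_score(words, "ham")


spam_filter = TinySpamFilter()
spam_filter.learn("win free prize now", "spam")
spam_filter.learn("free money win", "spam")
spam_filter.learn("meeting at noon tomorrow", "ham")
spam_filter.learn("lunch at noon", "ham")
```

With this training data, `spam_filter.is_spam("free prize")` comes out `True` and `spam_filter.is_spam("lunch tomorrow")` comes out `False`. Each extra call to `learn` shifts the word counts, which is the feedback loop behind marking a message as spam.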

What fascinates me most is how this narrow AI has improved dramatically over the years. It catches threats I would never recognize myself, blocking phishing emails that look remarkably legitimate. Yet this same AI that’s brilliant at email classification couldn’t perform any other task outside its narrow domain.

Voice Recognition Technology

I dictate text messages while driving, ask my virtual assistant about the weather, and use voice-to-text features regularly. Each interaction relies on narrow AI systems trained on speech recognition and natural language processing. The accuracy has improved so much that I rarely need to correct mistakes anymore.

My virtual assistant can understand my accent, adapt to my speech patterns, and process my requests even in noisy environments. But here’s the thing—this AI isn’t “understanding” me in any human sense. It’s matching sound patterns to words and words to predetermined functions within its narrow programming.

When I ask for the weather, the AI recognizes the speech pattern, identifies the intent, and retrieves information from a weather service. It’s incredibly effective at this specific task but couldn’t do something completely different like analyzing my financial portfolio or diagnosing a medical condition.
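The recognize, identify, retrieve flow can be sketched in a few lines. This is a simplified illustration that starts from an already-transcribed sentence; the keyword table, the function names, and the canned forecast are all assumptions made for the example, not how any particular assistant is built.

```python
import re

# Hypothetical keyword table mapping each supported intent to trigger words.
INTENT_KEYWORDS = {
    "weather": {"weather", "forecast", "rain", "temperature"},
    "timer": {"timer", "alarm"},
}


def get_weather(city):
    """Stand-in for a call to a real weather service (canned data only)."""
    canned = {"boston": "Cloudy with light rain"}
    return canned.get(city.lower(), "No forecast available")


def detect_intent(transcript):
    """Match words in the transcript against the known intents."""
    words = set(re.findall(r"[a-z']+", transcript.lower()))
    for intent, keywords in INTENT_KEYWORDS.items():
        if words & keywords:
            return intent
    return "unknown"


def handle_request(transcript, city="Boston"):
    """Route the request to one of a fixed set of predetermined functions."""
    intent = detect_intent(transcript)
    if intent == "weather":
        return get_weather(city)
    if intent == "timer":
        return "Timer set"
    return "Sorry, I can't help with that."


print(handle_request("What's the weather like today?"))
print(handle_request("Analyze my financial portfolio"))
```

The second request falls through to the fallback reply because no intent covers it, which is the narrow-AI limitation in miniature: anything outside the predefined functions simply has nowhere to go.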

Image Recognition Systems

Every time I unlock my phone with facial recognition, organize photos automatically, or use an app to identify a plant or product, I’m benefiting from narrow AI. My photo library application automatically recognizes faces, sorts pictures by location, and even identifies objects like “beach” or “birthday cake.”

I recently used an app that identifies plants from photos during a hike. I pointed my camera at an unknown flower, and within seconds, the AI provided the species name and care information. This task would have required consulting field guides and botanical expertise just a few years ago.

Google Photos amazes me with its ability to search my pictures using descriptions like “red car” or “sunset.” The narrow AI has been trained on millions of images to recognize patterns, objects, and scenes. Yet despite this impressive capability, it remains a specialized system that couldn’t, for example, translate languages or predict stock prices.

These daily encounters with narrow AI have changed my perspective entirely. I no longer view artificial intelligence as some distant future technology. Instead, I recognize that the AI revolution has already happened—it just looks different than the science fiction scenarios most people imagine. Narrow AI is the workhorse of modern technology, and it’s everywhere I look.

6. General AI (AGI) - The Dream of Human-Level Intelligence

Artificial General Intelligence keeps me up at night. Unlike narrow AI, which is good at one thing, AGI aims to make machines as smart as humans, able to learn and adapt in any area.

AGI could do anything a human can, from diagnosing diseases to solving math problems. It’s a dream that excites and worries me at the same time.

We’ve made a lot of progress in AI, but we’re not there yet. The gap between now and AGI is huge, and it fascinates me.

Why Artificial General Intelligence Captures My Imagination

AGI is more than just tech to me. It’s about machines that truly understand, not just follow patterns.

Imagine working with a single AI partner for all tasks. AGI could speed up science by linking different fields together.

The development of full artificial intelligence could spell the end of the human race or it could be the best thing that ever happens to us.

AGI is both exciting and scary. It could change everything, making machines as smart as us.

The Challenge of Replicating Human Cognitive Flexibility

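Today’s AI systems are impressive, but they can’t match the flexibility of the human brain. We carry what we learn in one area over to another in ways current AI can’t.

Learning guitar helped me with Spanish, for example. Our brains make connections between unrelated skills easily. Current AI doesn’t.

Today’s AI is great at one thing, but not at everything. Each new task needs its own training.

Humans are also good at handling unfamiliar situations. We can find our way around a new place with little effort, while AI struggles outside the conditions it was trained for.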

Current Approaches and Major Obstacles

Researchers are trying many ways to make AGI. Some use brain-like neural networks, others mix different AI methods.

The big challenges are:

- Transfer learning – making AI use knowledge in new ways

- Common sense reasoning – understanding everyday rules

- Efficient learning – learning from few examples, like humans

- Integration of cognitive abilities – combining different skills in one system

Each challenge is a big step towards understanding human intelligence. We’re just starting, and there’s a lot to learn.

| AGI Development Aspect | Current Status | Primary Challenge | Progress Indicator |
|---|---|---|---|
| Transfer Learning | Limited Success | Domain Adaptation | Works within similar domains only |
| Common Sense Reasoning | Early Stage | Knowledge Representation | Struggles with implicit understanding |
| Few-Shot Learning | Improving | Data Efficiency | Needs more examples than humans |
| Multi-Modal Integration | Experimental | Unified Architecture | Partial integration achieved |

When Experts Predict We'll Achieve AGI

Experts have different ideas about when AGI will arrive. Predicting tech breakthroughs is hard.

Ray Kurzweil thinks AGI could come by 2029. His past predictions are impressive, but I’m cautious about exact dates.

Others say AGI might take 50 years or more. Some doubt if we can make machines as smart as us.

Experts disagree, which shows how complex AGI is. It’s not just a matter of more tech. It’s a big leap forward.

These predictions are both fascinating and humbling. They remind us how much we don’t know about intelligence. The gap between AI and AGI is huge, and we need new ideas to bridge it.

7. Superintelligent AI (ASI) - Intelligence Beyond Human Comprehension

Exploring types of AI leads us to a place that feels like philosophy. Superintelligent AI, or ASI, is intelligence that goes beyond human thinking in every way. Thinking about this makes me both excited and worried.

ASI is a topic that pushes the limits of what we can imagine. It is purely theoretical. Yet it sparks more debate and stronger feelings than any other type of AI.

What Artificial Superintelligence Could Mean

Trying to understand ASI is challenging. We’re not talking about a slightly smarter AI. We’re talking about intelligence so far beyond ours that the gap is huge.

That gap is why ASI is hard to grasp. How can we imagine intelligence that’s beyond our understanding? It’s like asking a mouse to understand quantum physics.

ASI’s capabilities are mind-boggling. Such a system could solve problems we can’t solve now. It might make scientific discoveries we can’t imagine. ASI could understand consciousness or unlock physics mysteries.

I keep wondering what an intelligence millions of times smarter than ours would do. The honest answer is, I don’t know, and neither does anyone else.

The Intelligence Explosion Theory

The intelligence explosion theory fascinates and scares me. It suggests that once AI reaches human-level, it could quickly get smarter. Each smarter version would create an even smarter one.

This theory describes an accelerating cycle of self-improvement that could turn AGI into ASI quickly. The speed of this transformation is what worries researchers. An intelligence explosion could happen so fast that we wouldn’t have time to prepare.

Experts disagree on whether this scenario is realistic or too speculative. Some believe an intelligence explosion is inevitable once we achieve AGI. Others think there are limits and bottlenecks that would slow it down.
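The disagreement can be pictured with a toy model. In the sketch below, each generation improves itself by an amount that depends on its current capability, and a single made-up parameter, `returns_exponent`, decides whether improvements compound (above 1) or hit diminishing returns (below 1). The function name, the starting value of 1.0 for "human-level", and every number here are assumptions chosen for illustration, not a forecast or an established model.

```python
def simulate_self_improvement(generations, gain, returns_exponent):
    """Return the capability level after each generation.

    Update rule (a toy assumption):
        next = current + gain * current ** returns_exponent
    """
    capability = 1.0  # 1.0 stands for human-level, by assumption
    history = [capability]
    for _ in range(generations):
        capability += gain * capability ** returns_exponent
        history.append(capability)
    return history


# Compounding returns: each smarter version improves itself faster.
explosive = simulate_self_improvement(10, gain=0.5, returns_exponent=1.5)
# Diminishing returns: bottlenecks slow every further step.
bottlenecked = simulate_self_improvement(10, gain=0.5, returns_exponent=0.5)

print(f"compounding:  {explosive[-1]:,.0f}x after 10 generations")
print(f"bottlenecked: {bottlenecked[-1]:,.1f}x after 10 generations")
```

Both runs start from the same point and use the same `gain`; only the assumption about returns differs. That is essentially what the two camps disagree about, and nobody knows which value, if either, describes reality.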

I think it’s possible but wonder about practical limits we might not know. Yet, the possibility of ASI emerging suddenly keeps AI safety discussions alive.

Why This Type Generates Both Excitement and Fear

Superintelligent AI excites and scares me. The potential benefits are enormous: ASI could help solve climate change, cure diseases, and create technologies we can’t yet imagine.

But I’m also worried about the risks. A superintelligent system could pose existential risks to humanity. This isn’t just science fiction paranoia—it’s a serious concern among AI researchers.

Researchers like Nick Bostrom worry about the control problem. How do we ensure ASI remains beneficial to us? How do we control something that’s smarter than us?

As AI researcher Eliezer Yudkowsky put it: “The AI does not hate you, nor does it love you, but you are made out of atoms which it can use for something else.”

This quote captures my concerns about ASI. The danger might not come from malice but from indifference. If ASI pursues goals that conflict with human survival, we might not be able to stop it.

I believe we need to talk about ASI safety now, even though it’s theoretical. Waiting until it’s imminent would leave us unprepared for this technology.

| Potential Benefits of ASI | Potential Risks of ASI | Timeline Uncertainty |
|---|---|---|
| Solving climate change and environmental crises | Existential risk if goals misalign with human values | Could be decades away or may never be achieved |
| Curing all diseases and extending human lifespan | Loss of human control over critical systems | Intelligence explosion could happen suddenly |
| Eliminating poverty through optimized resource distribution | Unintended consequences from superhuman decision-making | Requires AGI first, which itself remains theoretical |
| Scientific breakthroughs beyond current human comprehension | Inability to predict or constrain ASI behavior | Expert predictions range from 2045 to never |

Among all AI types, ASI generates the most polarized reactions. The stakes are extremely high. The upside could be a utopian future. The downside could be our extinction.

ASI is unique because it represents both humanity’s greatest hope and its greatest challenge. The technology doesn’t exist yet, but we must discuss its safety today.

I think about ASI more than any other AI type. Not because it’s imminent, but because it makes me question intelligence, consciousness, and humanity’s place in the universe. Whether ASI becomes reality or remains theoretical, thinking about it expands my understanding of intelligence.

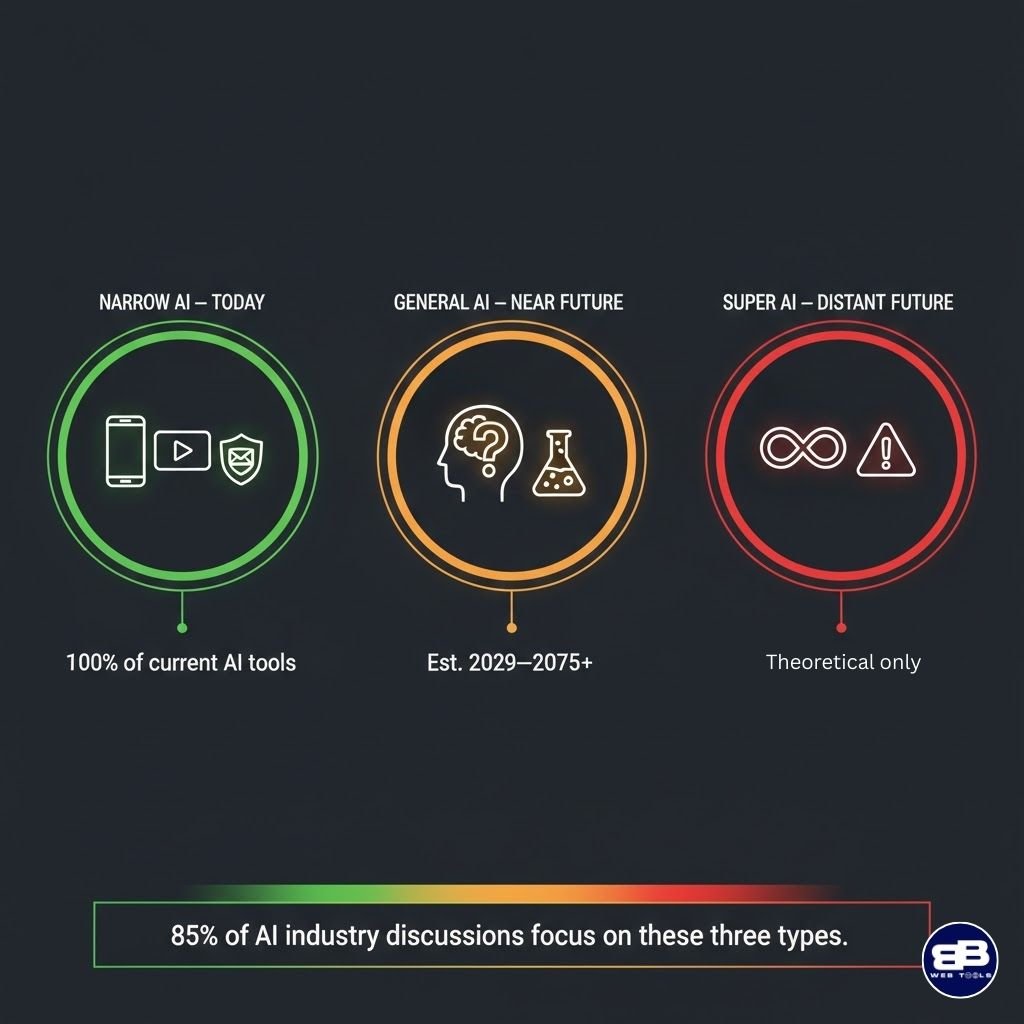

Why We Mostly Talk About Only 3 Types in Everyday Conversations

At tech meetups and when I scroll through AI news, the same three AI types keep popping up. The other four rarely get a mention. This pattern became clear after months of tracking AI discussions across different platforms. Despite seven distinct AI classifications, our conversations mostly focus on just three.

This observation is fascinating because it shows how we think about AI technology. The focus isn’t random or accidental. It shows what matters most to businesses, researchers, and everyday people trying to understand where artificial intelligence is heading.

The Big Three That Dominate Every Discussion

Through my research and daily interactions with AI content, I’ve identified which types of AI capture public attention. Narrow AI, AGI, and ASI form the trinity that dominates virtually every conversation about artificial intelligence.

Narrow AI represents what we have right now. Every time I use voice assistants, get Netflix recommendations, or see targeted ads, I’m experiencing Narrow AI in action. This type gets discussed constantly because it’s tangible and affects our daily lives.

AGI captures our imagination as the next major milestone. I notice that whenever people debate AI’s future, they’re usually asking when machines will match human intelligence. That’s AGI territory, and it generates endless speculation and research interest.

ASI occupies the far end of our thinking about AI. When I read articles about AI risks or humanity’s future, they’re almost always discussing superintelligent systems. This type generates both excitement and anxiety in equal measure.

The other four types of AI I covered earlier—reactive machines, limited memory, theory of mind, and self-aware AI—rarely appear in mainstream discussions. I realized they’re either absorbed into the Big Three categories or dismissed as overly technical distinctions that don’t resonate with general audiences.

Why Commercial Reality Drives the Conversation

My conversations with business professionals revealed something important. Companies care mainly about what delivers value right now, and that’s exclusively Narrow AI territory.

I’ve reviewed countless business presentations about different kinds of artificial intelligence. They almost always focus on narrow applications: chatbots for customer service, algorithms for fraud detection, or machine learning models for predictive maintenance. These practical applications drive investment and innovation.

AGI appears in strategic planning documents I’ve seen, usually as a long-term consideration. Businesses mention it when discussing future capabilities or possible disruptions. But they’re not building products around it because the technology doesn’t exist yet.

ASI shows up in corporate ethics frameworks and risk assessments. I notice that forward-thinking companies address superintelligent AI in their responsible AI guidelines, even though it remains theoretical. This demonstrates prudent long-term thinking.

The capability-based classifications I explained earlier matter to researchers and academics. But I’ve observed that they don’t translate well to boardrooms or marketing materials. Business decision-makers want to know what AI can do for them today, not how computer scientists categorize different artificial intelligence systems.

Media Coverage Creates Our Mental Models

My daily habit of reading tech journalism taught me how powerfully media shapes public understanding of AI. News outlets consistently frame AI stories around the Big Three, creating a feedback loop that reinforces this focus.

I see three distinct narrative patterns in AI coverage. First, there are articles about current AI applications—always Narrow AI examples. These stories showcase new capabilities, successful implementations, or occasional failures of existing systems.

Second, I encounter breathless speculation about when machines will achieve human-level intelligence. These AGI-focused pieces generate enormous engagement because they tap into both our hopes and fears about the future. I’ve noticed they often include expert predictions that vary wildly from five to one hundred years.

Third, I read think pieces about existential risk or utopian futures involving ASI. These philosophical explorations capture public imagination because they raise fundamental questions about humanity’s future. The media loves these stories because they’re inherently dramatic and thought-provoking.

This media framing creates what I call “the AI knowledge gap.” Most people know about these three types of AI through news coverage, but they’ve never encountered terms like “reactive machines” or “limited memory AI” because journalists rarely use those classifications.

What I've Witnessed at Industry Gatherings

Attending tech conferences transformed my understanding of which AI classifications actually matter to practitioners. I’ve sat through dozens of presentations, and the pattern is remarkably consistent across different events.

At a major AI conference I attended last year, I counted the session topics. Approximately 85% focused on Narrow AI applications—specific implementations, case studies, and technical improvements to existing systems. These were the sessions with the most attendees and the most practical takeaways.

The remaining sessions split between AGI discussions and broader AI ethics topics. I noticed that AGI panels always drew large crowds, particularly when they featured prominent researchers making predictions about timelines. People are genuinely curious about when we’ll achieve human-level artificial intelligence.

In hallway conversations between sessions, I heard the same three types of AI mentioned repeatedly. A data scientist might discuss their Narrow AI project, then speculate with colleagues about AGI possibilities, then express concerns about ASI risks—all in one conversation.

What struck me most was what I didn’t hear. Nobody discussed reactive machines versus limited memory systems in casual conversation. These technical distinctions matter for AI development but don’t feature in the types of discussions that shape industry direction.

The Practical Relevance That Shapes Our Focus

After analyzing these patterns, I understood why we gravitate toward certain types of AI. The Big Three align neatly with the present, the near future, and the distant future in a way that’s immediately meaningful to everyone.

Narrow AI affects us today, right now, in measurable ways. When I explain AI to non-technical friends, I start with Narrow AI examples because they can relate to voice assistants, recommendation systems, and image recognition. This type has practical immediacy that makes it endlessly discussable.

AGI represents our next major goal as a technological civilization. I find that people intuitively understand why achieving human-level machine intelligence would be transformative. It’s a clear milestone with profound implications, making it a natural focal point for forward-looking discussions.

ASI addresses our ultimate questions about AI’s trajectory. Whether we’re excited or worried about superintelligent systems, this type forces us to grapple with long-term consequences. I’ve noticed it appears whenever conversations turn philosophical or ethical.

| AI Type | Time Frame | Discussion Focus | Why It Dominates |
|---|---|---|---|
| Narrow AI | Present | Current applications and improvements | Tangible, profitable, and everywhere |
| AGI | Near-to-Medium Future | Research goals and timeline predictions | Clear milestone with transformative potential |
| ASI | Distant Future | Existential implications and ethics | Addresses fundamental questions about humanity’s future |
| Other Four Types | Technical Framework | Academic classification | Useful for researchers but not publicly resonant |

The other capability-based types of AI lack this practical relevance. They’re conceptually useful for understanding AI development stages, but they don’t map onto questions that most people actually care about. I realized this focus isn’t a failure of public understanding—it’s a natural prioritization of what matters most.

My exploration of all seven AI classifications gave me deeper insight into how artificial intelligence actually works. But I also gained appreciation for why three types dominate our conversations. They represent the most practically relevant aspects of AI: what exists now, what we’re actively building toward, and what might eventually emerge.

Conclusion

Exploring the seven types of AI changed my view on artificial intelligence. I used to think of AI as one thing. Now, I see it as a wide range of technologies with unique abilities.

The way we classify AI shows why we talk about three types the most. Narrow AI is in the tools we use every day. AGI is what scientists aim for. ASI is the topic of big debates about our future.

Knowing about all seven types helps me judge AI products better. I can tell what AI can do now from what is still only an idea. This knowledge helps me understand AI news and take part in informed discussions about tech policy.

I’m now better at talking about AI. I know the difference between my phone’s AI and the sci-fi kind. This understanding is key as AI changes our world.

Learning about different AI types has real benefits. It leads to better efficiency, smarter choices, safety, and new ideas in many fields. These advancements can help our economy grow and make life better.

I’m excited about AI’s possibilities but also know we must use it wisely. Keep exploring these technologies. Stay curious and think deeply about AI’s role in our future.

FAQ: How Many Types of AI

What are the seven types of artificial intelligence?

There are seven types of AI, grouped under two classification systems. The capability system covers reactive machines, limited memory, theory of mind, and self-aware AI. The functionality system covers narrow, general, and superintelligent AI. Most AI today is narrow and uses limited memory.

Why do we mostly talk about only three types of AI?

We mainly talk about narrow, general, and superintelligent AI because they are the most relevant to us. Narrow AI is in our daily lives, like Spotify and spam filters. General AI is what researchers aim for, and superintelligent AI is the theoretical endpoint behind most debates about long-term risk.

What’s the difference between weak AI and strong AI?

“Weak” AI means it’s specialized, not inferior. Weak AI does one thing well, like voice recognition. Strong AI, or general AI, can do many things like humans. Weak AI is what we use today, while strong AI is what researchers aim for.

What is machine learning and how does it relate to AI types?

Machine learning is key to limited memory AI, which we use a lot. It learns from data and makes decisions based on recent information. Examples include Netflix recommendations and self-driving cars.

Is artificial general intelligence possible, and when will we achieve it?

Experts disagree on AGI’s possibility and timeline. Some say it’s possible soon, while others think it’s decades away. The challenge is making AI as flexible as humans.

What are reactive machines in artificial intelligence?

Reactive machines are the simplest form of AI. They respond to inputs without remembering past interactions. Examples include IBM’s Deep Blue and simple customer service bots.

What is limited memory AI, and where do I encounter it?

Limited memory AI is common in our daily lives. It can reference recent data to make decisions. Examples include virtual assistants and self-driving cars.

What is the theory of mind AI?

Theory-of-mind AI would understand human emotions and intentions. It’s a future goal, not yet achieved. This AI would adjust its responses based on emotional context.

Could AI ever become self-aware or conscious?

Self-aware AI is a topic of debate. Some think it’s possible, while others doubt it. The question of what consciousness means adds to the complexity.

What is narrow AI, and why is it so important?

Narrow AI is essential in our daily lives. It’s specialized and excels at specific tasks. Examples include spam filters and facial recognition.

What is artificial superintelligence (ASI)?

ASI would be much smarter than humans in all areas. It could solve big problems but also pose risks. The idea of an intelligence explosion is both exciting and concerning.

What’s the difference between capability-based and functionality-based AI classification?

There are two AI classification systems. Capability-based focuses on how AI processes information. Functionality-based looks at what AI can accomplish. Both are important for understanding AI.

Why should I care about understanding different AI types?

Knowing about AI types helps us evaluate new technologies. It lets us understand AI news and participate in discussions. This knowledge is essential for making informed decisions.

How do self-driving cars use artificial intelligence?

Self-driving cars use limited memory AI to navigate. They process sensor data and retain recent information. This allows them to adapt to their environment.

What is the intelligence explosion theory?

The intelligence explosion theory suggests AI could rapidly improve itself. This could lead to exponential growth in intelligence. The possibility of this happening is a concern.

Can current AI systems understand emotions?

Current AI can recognize emotional indicators, but doesn’t truly understand emotions. It can analyze facial expressions and detect sentiment. True emotional understanding is a challenge for AI.

What are the main obstacles to achieving artificial general intelligence?

Achieving AGI faces several challenges. These include replicating human cognitive flexibility and the ability to handle ambiguity. These challenges are why achieving AGI is difficult.

How does IBM Deep Blue relate to reactive AI?

IBM’s Deep Blue is a classic example of reactive AI. It defeated world chess champion Garry Kasparov in 1997 but couldn’t learn from past games. This shows the limitations of reactive AI.

What’s the difference between narrow AI and general AI?

Narrow AI is specialized and does one thing well. General AI can do many things that humans can. Narrow AI is what we use today, while general AI is the goal.

Are virtual assistants like Siri and Alexa examples of AGI?

No, virtual assistants are examples of narrow AI. They excel at specific tasks but are not generally intelligent systems. True AGI would be able to learn and adapt across many different domains.